by Ashutosh Jogalekar

Earlier this week, European investigators concluded that the Russian opposition leader Alexei Navalny had been killed with epibatidine, a toxin unknown in Russia’s natural environment and ordinarily found only in the skin of small, brilliantly colored frogs native to the rainforests of South America. If that conclusion is correct, a molecule shaped in one of the most intricate ecosystems on Earth has completed a journey that ends not in the forest, nor in the laboratory, but in a prison cell. For Putin’s Russia, this is one more marker on the road to political assassination using chemical and biological weapons.

Long before laboratories named it, indigenous communities of the Amazon understood through long experience that certain tiny, extraordinarily bright and beautiful frogs carried extraordinary power in their skin. The knowledge was practical and restrained. It served hunting, survival, and continuity. It was part of a relationship with the living forest in which danger and respect were inseparable. Nothing in that knowledge pointed toward geopolitics or assassination. The molecule existed only within a web of life that had shaped it.

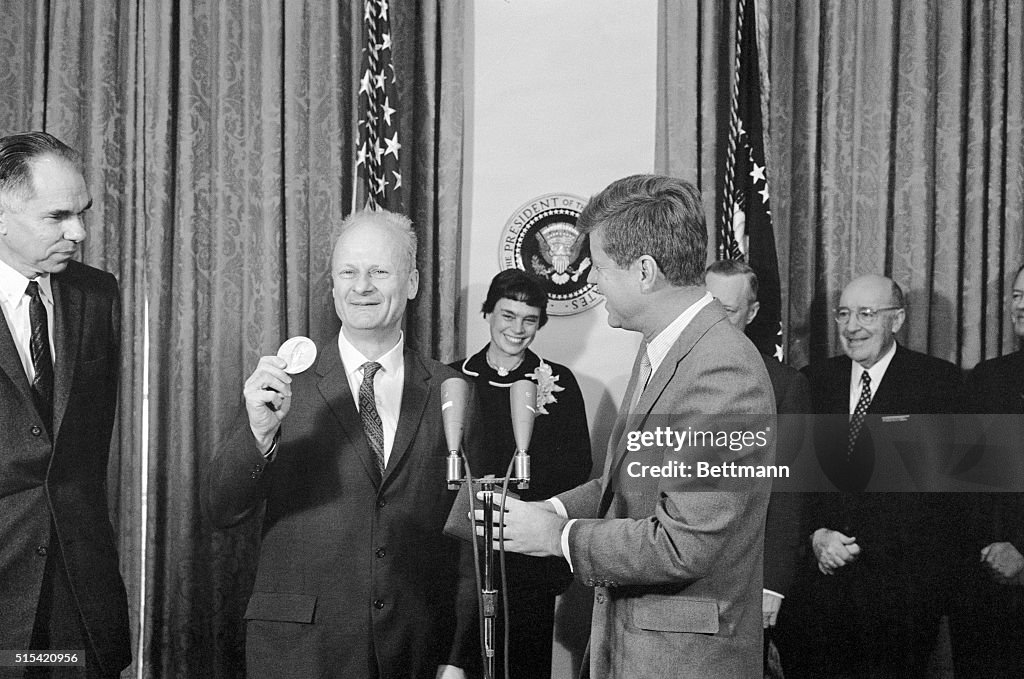

Centuries later, science encountered the same substance and read it differently. At the National Institutes of Health, the chemist John Daly devoted decades to the study of amphibian alkaloids, following faint chemical traces through repeated expeditions, careful collections, and patient analysis. His work was driven by persistence and by the belief that small natural molecules could reveal deep biological truths. From thousands of specimens and years of attention emerged epibatidine, a molecule isolated from the skin of a poison dart frog endemic to Ecuador and Peru: a structure modest in size yet immense in biological effect, binding human receptors with an affinity evolution had refined without intention. Daly turned into something of a folk hero whose findings resonated beyond the halls of chemistry.

Now there is a