by Marie Snyder

I started reading about burnout when I walked away from teaching earlier than expected. Suddenly, I couldn’t bring myself to open that door after over thirty years of bounding to work. A series of events wiped away any sense of agency, fairness, or shared values. Their wellness lunch-and-learns didn’t help me, and I soon discovered I’m not alone.

An article published in JAMA last June looked at rising rates of burnout in healthcare, where 40% of physicians surveyed intended to leave their practice. They suggest, “To prevent a health care worker exodus, experts argue that the emphasis needs to shift from individual resilience to broader system-level improvements.” They are looking for standardized methods to affect organizational management with “evidence-based interventions.”

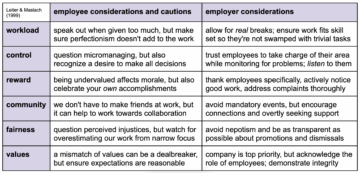

Over 25 years ago, Michael Leiter and Christina Maslach came to the same conclusion. They identified six areas of worklife affecting burnout and created a specific assessment for educators. They determined the cause to be a “mismatch” between employee expectations and employer behaviours leading workers to be closer to the bleak end of a continuum from burned out to engaged. They suggest that “the task for organizations and individuals is to achieve a resolution.” This is not just a matter of throwing wellness initiatives or resilience-speak into the mix, but addressing any reasonable expectations of employees with appropriate employer interventions in all six interrelating areas.

Feels vindicating, right?!

One problem with this solution, however, and possibly a reason why it’s not widespread, is that it’s often the employees who hold the highest standards and care for the workplace who are the most affected by burnout, and they might make up a small minority of workers. People who show up to learn the right buzzwords and put in the least effort required to hit their hours, without concern for the process and product of the company, can feel unscathed, and those employees can make up enough of the workforce to provoke organizations to continue the micromanaging and questionable reward schemes for the many.

Eugene Russell, a piano tuner interviewed by

Sughra Raza. Untitled. June, 2014.

In philosophical debates about the aesthetic potential of cuisine, one central topic has been the degree to which smell and taste give us rich and structured information about the nature of reality. Aesthetic appreciation involves reflection on the meaning and significance of an aesthetic object such as a painting or musical work. Part of that appreciation is the apprehension of the work’s form or structure—it is often the form of the object that we find beautiful or otherwise compelling. Although we get pleasure from consuming good food and drink, if smell and taste give us no structured representation of reality there is no form to apprehend or meaning to analyze, so the argument goes. The enjoyment of cuisine then would be akin to that of basking in the sun. It is pleasant to be sure but there is nothing to apprehend or analyze beyond an immediate sensation.

Bill Gates has long been one of the world’s leading optimists, and his new documentary, “What’s Next,” serves as a testament to his hopeful vision of the future. But what makes Gates’s optimism particularly compelling is that it is grounded not in dewy-eyed hopes and prayers but in logic, data, and an unshakable belief in the power of science and technology. Over the years, Gates and his wife Melinda, through their foundation, have invested in a wide array of innovative technologies aimed at addressing some of the most pressing issues faced by humanity. Their work has had an especially transformative impact on underserved populations in regions like Africa, tackling fundamental challenges in healthcare, energy, and beyond. In this new, five-part Netflix series, Gates showcases his trademark pragmatism and curiosity as he engages with some of the most complex and important challenges of our time: artificial intelligence (AI), misinformation, inequality, climate change, and healthcare. His approach stands out especially for his willingness to have a dialogue with those with whom he might strongly disagree.

I lived in Philadelphia in 1977 and would go to the Gallery mall on Market Street, within walking distance of our riverfront apartment. One day, around lunch, I decided to get Chinese food at the food court and, looking for a place to sit, I asked two older ladies if I could sit at their table, since the place was packed. As I was picking through the food, separating the celery and water chestnuts, one of the old ladies said

Sughra Raza. Meadowstream Afternoon, Maine, 2001.

By all accounts, Alexandre Lefebvre’s new book,