by Michael Abraham-Fiallos

I am sitting at a coffee shop downtown. It’s a nice Friday morning, not too hot and not too cool, not quite autumn and not quite summer. I have eaten, so I am no longer dreaming. And, I am reading “Howl” by Allen Ginsberg for the first time in at least half a decade.

I realize about halfway through the poem’s first long section that I don’t like this poem very much. Or, I don’t like this poem very much anymore. It’s a little bit racist, a little bit whiny, a little bit full of itself. It is profound; don’t get me wrong. It is epochal, in its way. But, it is not for me anymore. In his introduction to Howl and Other Poems, William Carlos Williams writes that “Howl” is “a howl of defeat. Not defeat at all for he has gone through defeat as if it were an ordinary experience, a trivial experience” (a sentence if ever there was one). Perhaps this is what I no longer like, this defeat, this sense that only in the abject is one to find the truth. The gambit of “Howl” is to think the marginal—the madman, the homosexual, the drug addict—as the site of visionary consciousness. Normally, this is a gambit for which I would be entirely down. But, contrary to Williams’s notion that, in the poem, “the spirit of love survives to ennoble our lives if we have the strength and the courage and the faith—and the art! to persist,” there is a kind of showmanship in the poem that does not sit well with me, a glorying in the abject that never quite reaches the eternal pronunciation of the Truth-with-a-capital-T that it explicitly declares as its intent. “Howl” is an exposé of the marginalized life, and it reads, to me at least, as imbued at every moment with the same kind of sensationalism on which the exposé thrives. A perfect example of this is the third section, addressed to Carl Solomon, whose refrain, “I’m with you in Rockland,” places Solomon in the Rockland psychiatric institution. While Ginsberg and Solomon did meet in a psychiatric institute, and while Solomon was in and out of them throughout his life, he was never in Rockland, and this bothered him. 
He also generally took issue with his representation in the poem, feeling it was not historically accurate and feeling, one imagines, sensationalized, reduced to his psychological afflictions to serve Ginsberg’s aesthetic aims. This is not to say that there is not gentleness in “Howl” at certain points, that Ginsberg did not belong to and care for the community he describes. But, “Howl” revels in a pain that I would seek to ameliorate rather than to celebrate. It takes too much pride in the total destruction of its protagonists and does not display enough worry for them.

However, as I read, I am taken back in time to a very different period in my life: sixteen and a fag, caught in the suburbs and dreaming of Manhattan, stumbling through sex and hopelessly in love with every boy, gay or straight or in between, who would bear his cock to me, tumbling over myself with hormones and earnestness and a flamelike desire to mean, to write. I found Ginsberg sometime around then. “Howl” consumed me like a dream.

My Presidency College friend Premen was always a voracious reader, particularly of political, social and military history. He often told me of new books in those areas and sometimes persuaded me to read them. But by the time I saw him again in Cambridge, I could see his fascination slowly turning from Trotsky to Mao. This was in line with a general movement among young people on the European left around that time. Jean-Luc Godard’s 1967 film La Chinoise captured the restless energy of politically activist students in contemporary France, foreshadowing the student rebellions that broke out a year or so later.

Imagine a world where the prison population is a rough mirror of wider society. In such a world there is a similar spread of rich and poor, highly educated and less educated, as well as a roughly equal proportion of men and women and of those from deprived areas and well-off areas. The proportions of different ethnic groups reflect those in the surrounding society, as does the age profile. Having a mental health problem bears no relation to the likelihood of being in prison, nor does being in care systematically increase the chances of ending up as a young offender. In addition, there seems to be no pattern from year to year: some years there are low levels of crime, and in other years the crime rate jumps for no discernible reason. The random nature of the prison population is recognised as providing good evidence for the belief that criminality is simply a result of individuals using their free will to make bad decisions, since we are all equally capable of this. After all, it could be argued, everyone is equal in possessing free will, and crime is a conscious and fully autonomous act in which social and psychological conditions play little part. Anyone, the argument goes, can be selfish or greedy and so succumb to criminality. In such a world, the general view is that prison exists to teach these individuals the error of their ways by providing them with extra motivation to retain their self-control the next time temptation beckons.

I went to France to study abroad as a 20-year-old in my third year of university. At the time, I had been studying French for eight years, but when I arrived in France, I found I was unable to express myself beyond the most rudimentary statements, and I couldn’t understand the rapid-fire questions sprayed at me by curious French students. After attending a dorm party that first weekend, I realized the gap between myself and the French students was simply too large to bridge; the most I could hope for from them was small talk and polite chatter—deep, meaningful conversation, and thus friendship, would be impossible.

Upton Sinclair famously remarked that “it is difficult to get a man to understand something when his salary depends on his not understanding it.” It is easy to imagine the sort of scenario that illustrates his point. A drug company rep works to increase how often a certain drug is prescribed, putting aside any worries that it is addictive. A video game designer seeks to increase the number of hours young players spend hooked on a game, not thinking about the impact this might have on their education.

“People in the know know him.” That’s what his English translator, Peter Constantine, told me. Grzegorz Kwiatkowski is emerging as an important poetic voice in today’s Poland, with six volumes of poetry to his name and translated editions on the way. His translator added, “He has a strange poetic voice, very original and stark.”

Most cinemas have been open for some time where I live. After having been indoors in restaurants and bars a few times, I was slowly reintroduced to the pleasures of sharing a space with strangers. And finally it felt like the right moment to, once again, set foot in a cinema.

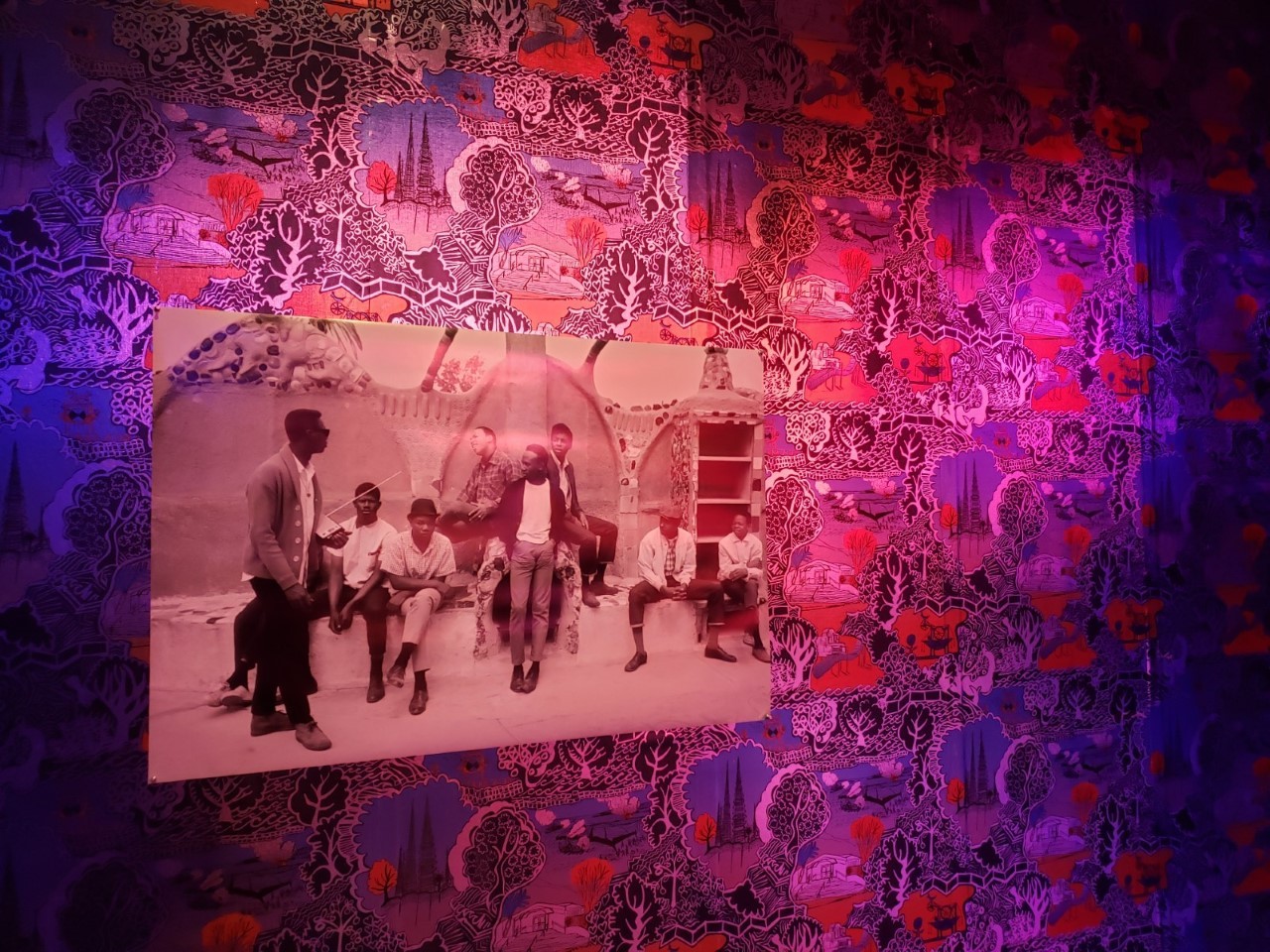

Cauleen Smith. Space Station Chinoiserie #1: Take hold of the Clouds, 2018.

Last night I (Danielle Spencer) went to the New York Film Festival screening of Memoria (dir. Apichatpong Weerasethakul) in Alice Tully Hall at Lincoln Center. I last joined a large gathering 19 months ago, in March of 2020.