by Eric Feigenbaum

When I lived in Singapore during the mid-aughts, it seemed like the only thing you needed to do Hot Yoga was to turn off the air conditioning or go outside.

In recent years, I have made hot yoga a part of my daily routine and am used to doing a variation of the Bikram set sequence at roughly 104 degrees and 50 percent humidity. On my last visit to Singapore, I decided to see if I could continue staying true to form.

There are a surprising number of yoga and hot yoga studios in Singapore today, so I sampled a few. The one whose format and facilities most resembled what I’m used to at home was Hom Yoga in the Orchard Center Mall.

A very friendly American-Singaporean couple owns and runs it. Hom Yoga had all the elements one would expect of a nice corporate yoga studio – spacious, light, well-appointed locker rooms with showers and hair dryers, towels, fancy water – the whole nine yards. They went the extra mile by providing mats and laying them all out like parking spaces, which felt very Singaporean.

Hom Yoga provided exactly what I sought – the classic Bikram 26 and 2 sequence pervasive in American Hot Yoga.

Of course, the command, “Change!” between asanas was a bit jarring. No gentle, “rock forward into plank” or “let’s all meet in downward-facing dog.” While it felt shockingly un-yogic, maybe you get used to it with time.

One very noticeable difference was the vibe. Maybe I’m just spoiled, but the studios I have attended in the US are very community oriented. People know each other. There are hugs and catching up between classes or while waiting for one to start. One teacher calls the ten minutes leading up to his class “The Muppet Show” because of the quiet din of everyone chatting.

No one at Hom Yoga was talking. Maybe they were waiting for the “change!”

I doubt there were even half as many yoga studios as there are today when I first visited Singapore in late 2003 and early 2004. In 2005, when my friend Alex came to join me to recruit nurses for US hospitals, he was taken aback by how disconnected Singapore was from clean living, healthy food, self-care – all the things Alex had experienced in abundance in the Bay Area for several years prior.

Alex also noticed that Singapore lacked its own art. He felt that the amazingly strong and tight systems design that had made Singapore a success in just one generation, and was catapulting it to greater heights in the next, didn’t leave its citizens the pathos that comes from suffering and that, when combined with creativity, often leads to great art.

While I never liked that assertion, the proof seemed to be in the pudding – Singapore had one modest art museum and a handful of small galleries. If there was any thriving art in Singapore, it was architecture – not the fine arts.

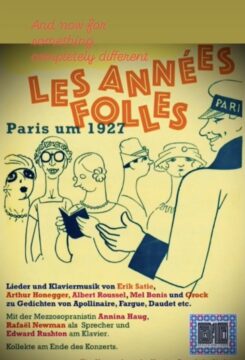

On Thursday this week I will join two of my colleagues—the mezzo Annina Haug and the pianist Edward Rushton—to present a program of poems by French authors to a private audience. We are staging our concert in Zurich, at the home of a descendant of one of those authors, the renowned Swiss-French clown and musician

I’m curious about the intersection of psychology, philosophy, and spirituality, and the more I read, the more closely they all appear to intertwine until they’re sometimes indistinguishable. Buddhism overlaps with Stoicism, which influenced Albert Ellis’s REBT (then CBT and all its variations). They dig down to acknowledge and question mistaken core beliefs. Plato inspired some of Freud’s work, which mixed with Sartre and Camus to become the existential psychotherapy of Irvin Yalom and Otto Rank. They have a focus on the acceptance of death, which comes back around to the Buddhist prescription to meditate on our bones turning to dust. Yet, despite a general theme being repeated, it’s striking how hard it is to get out from the minutiae of daily life to attend to it.

Sughra Raza. Microforest, March 2022.

The debate about whether artificial intelligence might one day become conscious is philosophically interesting. It raises age-old philosophical questions in a new form: What is a mind? What counts as experience? What would it mean for something made of code and silicon to have beliefs, desires, or a point of view? I covered some of those issues in a
