by Ashutosh Jogalekar

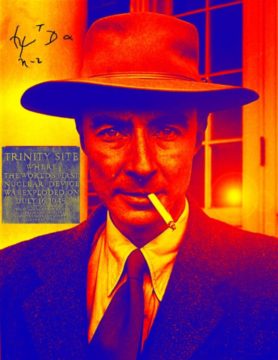

This is the third in a series of posts about J. Robert Oppenheimer’s life and times. All the others can be found here.

In 1925, there was no better place to do experimental physics than Cambridge, England. The famed Cavendish Laboratory there had been created in 1874 with funds donated by a descendant of the eccentric scientist-millionaire Henry Cavendish. It had been led by James Clerk Maxwell and J. J. Thomson, both physicists of the first rank. In 1924, the booming voice of Ernest Rutherford reverberated in its hallways. During its heyday and even beyond, the Cavendish would boast a record of scientific accomplishments unequalled by any other single laboratory before or since; the current roster of Nobel Laureates associated with the institution stands at thirty. By the 1920s Rutherford was well on his way to becoming the greatest experimental physicist in history, having discovered the laws of radioactive transformation, the atomic nucleus and the first example of artificially induced nuclear reactions. His students, half a dozen Nobelists among them, would include Niels Bohr – one of the few theorists the string-and-sealing-wax Rutherford admired – and James Chadwick, who discovered the neutron.

Robert Oppenheimer returned to New York in 1925, after a vacation in New Mexico, to disappointment. While he had been accepted into Christ’s College, Cambridge, as a graduate student, Rutherford had rejected his application to work in his laboratory in spite of – or perhaps because of – the recommendation letter from Oppenheimer’s undergraduate advisor, Percy Bridgman, which painted a lackluster portrait of Oppenheimer as an experimentalist. Instead it was recommended that Oppenheimer work with the physicist J. J. Thomson. Thomson, a Nobel Laureate, was known for his discovery of the electron, a feat he had accomplished in 1897; by 1925 he was well past his prime. Oppenheimer sailed for England in September.

One of the amusing things about academic conferences – for a European – is to meet American scholars. Five minutes into an amicable conversation, an American scholar will inevitably confide in a European one of two complaints: either how all their fellow American colleagues are ‘philistines’ (a favourite term) or (but sometimes and) how taxing it is always to be called out as an ‘erudite’ by said fellow countrymen. As Arthur Schnitzler demonstrated in his 1897 play Reigen (better known through Max Ophüls’s film version La Ronde from 1950), social circles are quickly closed in a confined space; and so, soon enough, by the end of day two of the conference, by pure mathematical calculation, as Justin Timberlake sings, ‘what goes around, comes around’, all the Americans in the room turn out to be both philistines and erudite.

Sa’dia Rehman. Allegiance To The Flag on Picture Day, 2018.

In the first round of this year’s NBA playoffs, Austin Reaves, an undrafted and little-known guard who plays for the Los Angeles Lakers, held the ball outside the three-point line. With under two minutes remaining, the score stood at 118-112 in the Lakers’ favor against the Memphis Grizzlies. LeBron James waited for the ball to his right. Instead of deferring to the star player, Reaves ignored James, drove into the lane, and hit a floating shot for his fifth field goal of the fourth quarter. He then turned around

I’m bored; you’re bored; we’re all bored. By our books and movies and television shows, the endless blandness of the Netflix queue, by our music and theater and art. Culture now is strenuously cautious, nervously polite, earnestly worthy, ploddingly obvious, and above all, dismally predictable. It never dares to stray beyond the four corners of the already known. Robert Hughes spoke of the shock of the new, his phrase for modernism in the arts. Now there’s nothing that is shocking, and nothing that is new: irresponsible, dangerous; singular, original; the child of one weird, interesting brain. Decent we have, sometimes even good: well-made, professional, passing the time. But wild, indelible, commanding us without appeal to change our lives? I don’t think we even remember what that feels like.

Viruses are possibly even more maligned than bacteria, spoken of exclusively in terms of disease. Here, virologist Marilyn J. Roossinck ranges far beyond human pathogens to convince you how narrow that picture is. She instead reveals them as enigmatic entities that are intimately entwined with the entirety of Earth’s biosphere, exploiting and enabling it in equal measure. Backed by numerous infographics, the book alternates between chapters on basic principles of virology and brief portraits of noteworthy viruses. The result is an entry-level introduction to virology that fascinated me more than I expected.

“This is not a scenic drive,” said James Willcox, of adventure travel specialist

At the press conference following the Cannes premiere of Martin Scorsese’s Killers of the Flower Moon, someone asked Robert De Niro about his character, a kingpin of a sort with a tricky psyche. “It’s the banality of evil,” he said, describing the character’s moral ambiguity. “It’s the thing we have to watch out for. We see it today, of course. We all know who I’m going to talk about, but I’m not going to say his name.” (
