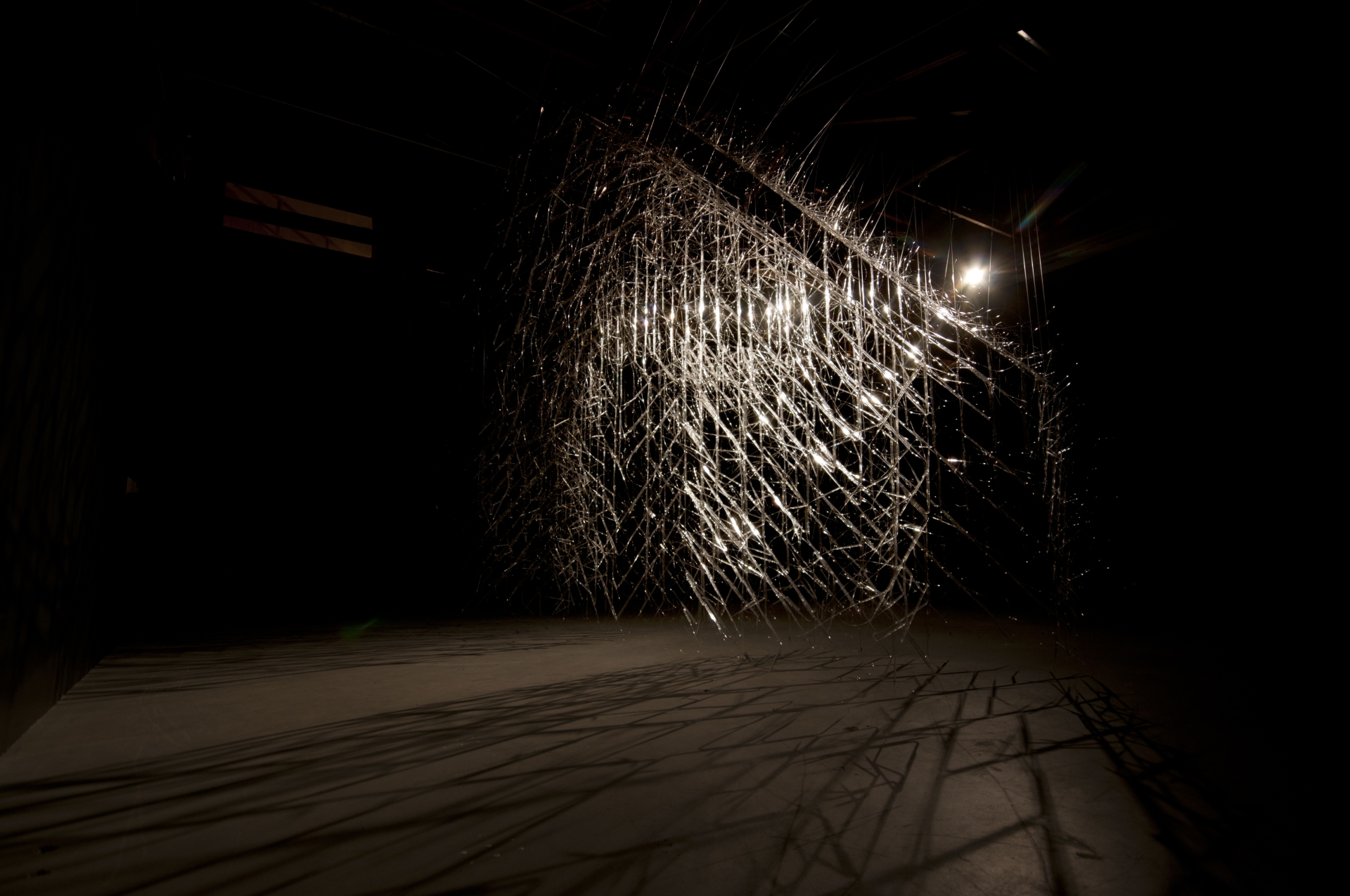

Katie Newell. Second Story. 2011, Flint, Michigan.

Enjoying the content on 3QD? Help keep us going by donating now.

by Michael Liss

Ay! I am fairly out and you fairly in! See which one of us will be happiest! —George Washington to John Adams, March 4, 1797

No one in American history has ever known better how and when to make an exit than George Washington. Just two days before Washington left for the figs and vines of Mount Vernon, the Revolutionary Directory of France issued a decree authorizing French warships to seize neutral American vessels on the open seas. There was a bit of tit-for-tat in this: in 1795, America had negotiated the Jay Treaty to resolve certain post-Independence issues between it and the British, including navigation without interference. But France was at war with England, and, while France wasn’t necessarily looking to shoot it out with the Americans, it did want to disrupt trade. Adams moved quickly to prepare the country, but the French were on a war footing, the Americans were not, and, by the end of 1797, roughly 300 American merchant ships with their supplies and crews had been taken. This was the so-called “Quasi-War.” Adams was deft with diplomacy: he sent a team to Paris to negotiate an end to the open hostilities, but they (supposedly) were met with demands for large bribes as a predicate for discussions (the “XYZ Affair”). The country seethed.

We Americans love to say that “politics stop at the water’s edge,” but it is kind of a comforting lie. Politics almost never stop, water’s edge or not, and that was certainly true in the Spring of 1798. Federalists prepared for war, pointing out the obvious—France didn’t exactly look like a friend. Democratic-Republicans claimed Federalists were manipulating the situation as a pretext to centralize power in their own hands, and to drive a wedge between America and its sister nation, Revolutionary France.

Of course, they were both at least a little right. America was trying to figure it all out. Beyond the bigger conflicts with Europe, there was something interesting at this moment going on in American politics. Politicians and voters were adding political identities, along with their regional and state-level ones. They were further sorting themselves into temperaments and teams inside the Federalist and Democratic-Republican Parties—so it was not just two combatants, but several, across a spectrum. It was all so new. In just a generation, we had gone from being 13 colonies, to being loosely tied States under the Articles of Confederation, to having a federal government with real authority. A lot of Americans, including those in elected office, didn’t really know how conflicts would be resolved between the individual and his State, his State and the federal government, or among the federal government’s three branches. The one thing that was not new was human nature—the tendency to remember the convenient, to fill the space of ignorance with self-interest, to believe in one’s own “rightness,” and to thirst for power. Read more »

The Saint Matthew Passion – yes, I know, by Bach – was a rock band I played in back in the ancient days, 1969 through 1971, when I was working on a master’s degree in Humanities at Johns Hopkins. Before I can tell you about that band, however, I want to tell you something about my prior musical experience, when I was just a kid growing up in Johnstown, Pennsylvania, in the western part of the state. Football country, Steeler country. Then I entered Johns Hopkins, where I finally allowed myself to like rock and roll. That’s when I joined the Passion. After that, ah after that, indeed.

I started playing trumpet in fourth grade, group lessons at school, then private lessons at home for a couple of years.

Next I started taking lessons with a man named Dave Dysert, who gave lessons out of a teaching studio he’d built in his basement. When I became interested in jazz, he was happy to encourage that. I got a book of Louis Armstrong solos. He’d accompany me on the piano. Made special exercises in swing interpretation. Got me to take piano lessons so I could learn keyboard harmony. I learned a lot from him: My Early Jazz Education 6: Dave Dysert. Those lessons served me well, when, several years later, I joined The Saint Matthew Passion.

When I entered middle school I joined both the marching band and the concert band. Marching band was OK, sometimes actual fun. But the music was, well, it was military music and popular ditties dressed up as military music. I even fomented rebellion in my junior year, which was promptly quashed. Concert band was different. We played “real” music – movie scores, e.g. from Ben Hur (“March of the Charioteers” was a blast), classical transcriptions, e.g. Dvorak’s New World Symphony, Broadway shows, e.g. West Side Story, and this that and the other as well. We were good, very good, both marching band and concert band.

I also played in what was called a “stage band” at the time. It had the same instrumentation as a big jazz band – trumpets, trombones, saxophones, rhythm section (drums, bass, guitar, piano) – and played the same repertoire. One of the tunes we played was the theme from The Pink Panther, by the great Henry Mancini. I was playing second trumpet, the traditional spot for the “ride” trumpeter, the guy who took the improvised solos. Since this arrangement was written for amateurs, there was a (lame-ass) solo written into the part. I wanted none of that. I composed my own solo. I’d been making up my own tunes for years, and Mr. Dysert had given me the tools I needed to compose a solo – another step further and I’d have been able to improvise on the spot, but that’s not how we did it back then, at least not in the sticks. So I composed my own solo. Surprised the bejesus out of the director the first time I played it in rehearsal. But he took it well.

That’s what I had behind me when, in the Fall of 1965, I went off to Johns Hopkins. Read more »

The music of what happens

begins with a bottom line of drums,

as in the foundation of a house,

percussion— the thumps of

bass in sync with a wind of horns:

baritone, bassoon, tuba; and in the

whispers of brushed snares, the

round tones of tympany, and in the

rests between —the spaces, those silent

shifts that may change everything:

a thunder-crash of cymbal, but then,

there, a rest, followed by

bells of glockenspiel—

by Dwight Furrow

It is a curious legacy of philosophy that the tongue, the organ of speech, has been treated as the dumbest of the senses. Taste, in the classical Western canon, has for centuries carried the stigma of being base, ephemeral, and merely pleasurable. In other words, unserious. Beauty, it was argued, resides in the eternal, the intelligible, the contemplative. Food, which disappears as it delights, seemed to offer nothing of enduring aesthetic value. Yet today, as gastronomy increasingly is being treated as an aesthetic experience, we must re-evaluate those assumptions.

The aesthetics of food, far from being a gourmand’s indulgence, confronts some of the oldest and most durable hierarchies of Western thought, especially the tendency to privilege vision over the other senses. At its core are five questions, each a provocation: Can food be art? What constitutes an aesthetic experience of eating? Are there criteria for aesthetic judgment in cuisine? How are our tastes shaped by culture and identity? And what happens when we step outside the West and reframe the premises of aesthetic theory?

Is Food Art?

If food pleases the senses, moves us emotionally, and its composition requires skill and creativity, why not call it art? Well, the ghosts of Plato, Kant, and Hegel hover over our plates even today. For Plato, food was mired in the appetitive soul, a distraction from the real essences of things which could only be recognized by the intellect. Kant dismissed gustatory pleasure as a mere “judgment of the agreeable,” lacking the disinterestedness and universality that marked true aesthetic judgment. And Hegel, in his consummate disdain, excluded food from art on the grounds that it perishes in consumption.

But these historical arguments can’t accommodate recent developments in cuisine. Contemporary, creative cuisine, after all, exemplifies many hallmarks of artistic practice: aesthetic intention, technical virtuosity, formal innovation, and even thematic expression. When Ferran Adrià designs a deconstructed tortilla or a moss-covered dessert, he is not merely feeding; he is composing. Read more »

by Steven Gimbel

In my Philosophy 102 section this semester, midterms were particularly easy to grade because twenty-seven of the thirty students handed in slight variants of the same exact answers, which were, as I easily verified, descendants of ur-essays generated by ChatGPT. I had gone to great pains in class to distinguish an explication (determining category membership based on a thing’s properties, that is, what it is) from a functional analysis (determining category membership based on a thing’s use, that is, what it does). It was not a distinction their preferred large language model considered and, as such, when I asked them to develop an explication of “shoe,” I received the same flawed answer from ninety percent of them. When I pointed out this error, half of the faces showed shame and the other half annoyance that I would deprive them of their usual means of “writing” essays.

My comments to them steered away from moralizing about academic integrity and instead I asked what kind of class this was. “Philosophy,” they droned united, sensing the irony. “And what do we do in philosophy?” “Think.” Yes, I told them, in a couple years they would have a boss, likely a romantic partner, maybe even kids, at which point not one of them will care what they think. This may be the last time anyone really does. Why surrender that? Isn’t that what makes you human? Why willingly hand over your very personhood to a machine when this may be your last opportunity to fully embrace it?

I pointed out that never during the semester did I appear in the classroom wearing anything unexpected on my feet. I don’t really need them to tell me what makes something a shoe. The point of the question was not to write down the correct answer. Rather, the value of the exercise was to wrestle with something that seems at first glance trivially easy, but then gets hard when you consider boundary cases. Take this straightforward case and see how tricky it is in order to start building the cognitive muscles you’ll need when thinking about justice, God, truth, or love. It is the process, the struggle, that is important. And that is precisely what our contemporary AI eliminates.

I asked how many work out and most hands went up. I then asked if they could lift more with a forklift. When they said yes, I asked “Then, why not take one to the gym?” This turned into a utilitarian justification of building skills that will benefit them in their future. Read more »

by Ed Simon

When critics describe Philip Levine as a “working class poet,” normally they have in mind his Detroit upbringing, or his affecting verse about rarely discussed subjects such as laboring on the assembly line of a Ford factory. Often, there is a sense that the former Poet Laureate of the United States is particularly working class not just because of his subjects, but because of his style as well; that Levine writes in an unaffected, unadorned, and unpretentious manner. This is the poet who in one of his most famous lyrics could defiantly write in the second person that “You know what work is – if you’re/old enough to read this you know what/work is, although you may not do it./Forget you,” that piercing two-word sentence after the end-stop simultaneously a declaration of independence from a particular variety of literati and a declaration of allegiance to another. All of this is fair, good, and true; Levine’s style of low-spun verse meticulously and carefully presents particular commitments in a manner that reads easily but is nonetheless intricate to compose. But that style need not be limited to those particular subjects for which the poet is most renowned.

Indeed, another poem from that 1991 collection What Work Is, entitled “Soloing,” does nothing less than explicate the origin of poems, or rather the origin of inspiration, and the manner in which human connection can be forged through artistic engagement; all of that accomplished without ever resorting to multisyllabic Latinate words like “transcendent,” “luminous,” “numinous,” or “incandescent.” The narrator of “Soloing” recounts a narrative whereby he comes to visit his mother in California, when she tells him about how in a dream the saxophone playing of John Coltrane moved her to tears. Unlike a more metaphysically inclined poet, say a Robert Hass or a Louise Glück, Levine is a poet who eschews an overly theological diction. “Soloing” is, in many ways, a manifestly concrete poem; abstractions are avoided throughout. We’re presented with images like a television which is “gray, expressionless;” of suburban streets with the “neighbors quiet,” of “palm trees and all-/night super markets pushing/orange back-lighted oranges at 2 A.M.” Yet there is a sacred in Levine’s profane, this land of abundance and plenty, where the repetitive parallelism and redundancy of “orange back-lighted oranges” is a miracle in its own way (not least of all in the eerie exactitude of the image).

Levine plays with this trope of the Golden State as a type of promised land (so different from the Detroit in the collection’s other poems), where “I have driven for hours down 99,/over the Grapevine into heaven…. Finding solace in California/just where we were told it would/be.” This Edenic language shouldn’t be read as ironic; indeed, when Levine writes “What a world, a mother and her son/finding solace in California” the chuckling weariness of it isn’t an expression of sarcasm, but of amazement. That amazement is the through-line in the poem’s story, both that the elderly mother can be so moved by the music of a “great man half/her age,” but also that the ineffability and inexpressibility of artistic connection can move anyone. Read more »

by Paul Braterman

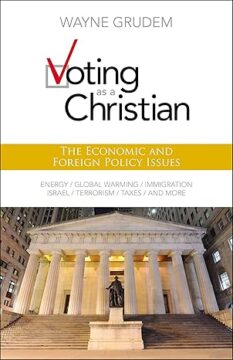

Voting as a Christian: The Economic and Foreign Policy Issues, Wayne Grudem. Zondervan 2010/2012, pp 330, 560 brief footnotes to text, no index!

WE AFFIRM that it is sinful to approve of homosexual immorality or transgenderism and that such approval constitutes an essential departure from Christian faithfulness and witness.

WE DENY that the approval of homosexual immorality or transgenderism is a matter of moral indifference about which otherwise faithful Christians should agree to disagree.

The above is Article X of the Nashville Statement, put out in 2017 by the Council on Biblical Manhood and Womanhood (CBMW), founded 1987. Not only does it presuppose that homosexual conduct and transgenderism are sinful, but it regards toleration of such conduct, and even toleration of toleration, as wrong.

CBMW, of which Wayne Grudem, author of the book under discussion, was a co-founder, “exists to equip the Church on the meaning of biblical sexuality,” this meaning being defined by a strict patriarchy, according to which men and women are equally precious in the sight of God, but women need to know their place, which is decidedly not in the pulpit. The signatories of the Nashville Statement include some of the most influential figures within US conservative Christianity, among them James Dobson (founder of Focus on the Family), Albert Mohler (President, The Southern Baptist Theological Seminary), Tony Perkins (President, Family Research Council), John MacArthur (then President, The Master’s Seminary & College; Pastor, Grace Community Church, whose London satellite we met in my most recent article here), and Ligon Duncan (Chancellor & CEO, Reformed Theological Seminary, whom I came across some years ago as a trustee of Highland Theological College), as well as Wayne Grudem himself.[1] There is a paradox here. Like CBMW, I am disturbed by the upsurge in demand for clinical transitioning, but for exactly the opposite reason. CBMW maintains that one’s sex determines one’s God-given role, and that it is therefore sinful to attempt to change it. I maintain that all roles should be open to all people, and that therefore transitioning should only very rarely be necessary.

Grudem, in my view, deserves broader attention, because of his connections and the scope and influence of his writings, and as a representative of the US Religious Right. He holds a PhD in New Testament studies from Cambridge, degrees in Divinity from Westminster Theological Seminary, and, what made him particularly interesting to me, a BA in economics from Harvard. For this reason I thought it interesting to see how he, as an economist, justifies the low taxation policies of the American Religious Right, which to me as an outsider to both economics and Christianity seems to be nonsense economics and very different from what I think of as Christian. I think now that I understand his position better, but, if possible, like it even less. I also see how it flows directly from a theology that includes but transcends biblical literalism, according to which God’s creation is so good, and so strongly directed to meet human needs, that any actual policy-making is an intrusion on His prerogative. Read more »

by Daniel Shotkin

As any teacher would agree, it’s incredibly difficult to get a classroom of teens to focus on a common topic. Yet at noon on May 8th, all 16 high school seniors in my AP Lit class were transfixed by one event: on the other side of the Atlantic, white smoke had come out of a chimney in the Sistine Chapel. “There’s a new pope” was the talk of the day, and phone screens that usually displayed Instagram feeds now showed live video of the Piazza San Pietro in Rome.

What is it about a bureaucratic election in a 2,000-year-old microstate that so completely captivated my class?

On paper, the Catholic Church has no business occupying the attention of anyone in secular society, let alone a group of teens who’ve never stepped foot in a church in their lives. Long gone are the days when the Papacy positioned itself as the world’s divine arbiter of authority. Kingly excommunications are few and far between, and the Pope—once ruler of a vast swath of central Italy—is now confined to a hill in Rome.

Despite all these changes, the Pope still occupies an outsized role in the popular imagination. Why? Because the papacy is a living inheritor of two historical narratives that continue to captivate Western thought. Read more »

by Tim Sommers

Welcome to the VR office and, hopefully, welcome to the coven! No, it’s not just witches, a group of vampires is called a “coven” too. VR? Human Resources for vampires, obviously.

Just a few last details before we can move forward. Lunch after, so let’s get through this.

I know that some of this has already been covered, but there’s a certain vampire to human ratio that it’s essential to enforce if we are going to continue letting humans do the hard work of maintaining things while we live amongst them undetected.

You’re aware, no doubt, of the many positive aspects of being a vampire. You will stop aging, repair injuries easily, potentially live forever, be erotically mesmerizing to humans (even though always dressed like a goth), have superhuman senses and strength and, yes, you can turn into a bat.

Can you even imagine what it’s like to be a bat?

Downsides. Obviously, can’t go to church, be around crosses, holy water. You can’t go outside during the day. You don’t appear in mirrors, which for many is a big one, I mean, fixing your hair can be a nightmare!

What else? You can’t put garlic on your pizza. In fact, you can’t have pizza at all. Or coffee. Or chocolate. Or alcohol. Or anything except human blood. Which I guess is a biggie for a lot of people, but I don’t really get it. I mean, sure, you have to murder and consume the blood of a human several times a week, but what’s the big deal really? There are billions of them.

But please, keep in mind, being a vampire, a hunter, an outsider, is no easy thing. It’s not like the movies where you just go to parties or lounge about all night between kills. No. Being a vampire, in many ways, is more a thrill than a pleasure. Read more »

by David Beer

Danish author Solvej Balle’s novel On the Calculation of Volume, the first book translated from a series of five, could be thought of as time loop realism, if such a thing is imaginable. Tara Selter is trapped, alone, in a looping 18th of November. Each morning simply brings yesterday again. Tara turns to her pen, tracking the loops in a journal. Hinting at how the messiness of life can take form in texts, the passages Tara scribbles in her notebooks remain despite the restarts. She can’t explain why this is, but it allows her to build a diary despite time standing still. The capability of writing to curb the boredom and capture lost moments brings some comfort.

There are no chapters and no endings; occasionally we are given the number of 18ths of November Tara has endured. Those occasional numerical markers replace dates in the diary. As a consequence, the volume of repetitions becomes the key metric. The day takes on extra dimensions when the limits of what is possible in a single 24 hours can be explored so intricately. Unlike similar conceptions, Tara can move around, waking wherever she ended the previous version of the same day. She also ages, a burn on her hand heals to a scar, and certain things stay where she put them too. The absences also remain. Repeated food purchases leave gaps on shop shelves. Inexplicably, those gaps remain. Yet it is the absence of uncertainty that weighs most heavily on Tara. When you know what is coming, unpredictability is lost; it has to be actively sought out instead.

It is the combination of Tara’s agency, the traces of her repetitions and the materiality of the experienced loops that gives this time loop its realist property. We see how reliving the same day alters perspectives on people, places, space, nature, and so on. There is no when and no if to the story; what we get instead is what happens to someone experiencing endless predictability. At first, the things that are the same stick out to Tara. A piece of dropped bread that falls slowly to the floor, a rain shower, footsteps on stairs, all become overwhelmingly familiar. Over time, the inconsistencies start to become a preoccupation. Tara can’t understand why certain objects stay whilst others return to their original location. Perhaps we shouldn’t expect the outcome of a major temporal disruption to be well-ordered and logical. Read more »

Ambulance speeds by the much slower boat I was in, at quite an unbelievable speed, in Venice.

by Ken MacVey

Many have talked about Trump’s war on the rule of law. No president in American history, not even Nixon, has engaged in such overt warfare on the rule of law. He attacks judges, issues executive orders that are facially unlawful, coyly defies court orders, humiliates and subjugates big law firms to his will, and weaponizes law enforcement to target those who seek to uphold the law.

What is not talked about as much is that this is part of a self-reinforcing pattern. Trump’s words, conduct, and example have been an assault on norms we once took for granted. With Trump 2 the assault has intensified. The new normal is there is no normal.

Trump as a businessman has never been a producer in the way other businesses are. Businesses sell products and services. Businesses manage their operations. As Warren Buffett commented, Trump is not particularly good at business operations but is good at licensing. That is because Trump’s product is himself. He is the product people consume and we are all his consumers: to love him is to be a consumer; to despise him is to be a consumer. Fox News and MSNBC are equally fueled by selling his product, which again is Trump himself. His game and his product are the same: “Look at me!” And we all fall for it.

It has become a cliché to note that Trump is a convicted felon, a businessman who has gone through six bankruptcies and who boasts about how he stiffs others. It is widely remarked that he lies, cheats, and, according to a jury and his own admission, sexually assaults women. He is constantly taunting and insulting others, testing and crossing boundaries. None of this seems to ultimately hurt him. This is all part of the “authenticity” his base finds so appealing. He is the ultimate “sticking it to the Man” guy and by each insult, each crossing of what for anyone else would be a bridge too far, has become the Man himself. As president in a second term he is taking selling his product, that is himself, to new levels. People can buy access by contributing millions to his inauguration committee, paying millions to his crypto fund, or millions for his Trump gold card. Again, the product is always Trump himself. This then is coupled with his two-in-the-morning postings on Truth Social (another Trump product where Trump is the product) or his White House-posted AI-generated images of him basking in the sun with Musk at a future resort in Gaza or his portrayal as king or pope.

The point is Trump is always the product, the center of attention, and no norms apply. Read more »

by Christopher Hall

When this article is published, it will be close to – perhaps on – the 39th anniversary of one of the most audacious moments in television history: Bobby Ewing’s return to Dallas. The character, played by Patrick Duffy, had been a popular foil for his evil brother JR, played by Larry Hagman on the primetime soap, but Duffy’s seven-year contract with the show had expired, and he wanted out. His character had been given a heroic death at the end of the eighth season, and that seemed to be that. But ratings for the ninth season slipped, Duffy wanted back in, and death in television, being merely a displaced name for an episodic predicament, is subject to narrative salves. So, on May 16, 1986, Bobby would return, not as a hidden twin or a stranger of certain odd resemblance, but as Bobby himself; his wife, Pam, awakes in bed, hears a noise in the bathroom and investigates, and upon opening the shower door, reveals Bobby alive and well. She had in fact dreamed the death, and, indeed, the entirety of the ninth season.

This imposition on the audience’s credulity (though rarely done with such chutzpah) has occurred often enough in television and other media (comic books in particular, which is where the term originated) to have earned a name: retroactive continuity. Continuity refers to our sense that events should proceed in logical sequence, but the retroactive element insists that a key and unexpected change has occurred which alters or nullifies some previous sequence. Something deeply out of expected continuity has happened in the narrative, which means that our interpretation of previous events must be completely changed, or, in this case, obliterated. You watched season nine, but it didn’t happen. Schrödinger’s Bobby Ewing: we saw him die, worked through the consequences of that for a season of television, and yet here he is lathering up in the shower.

Retroactive continuity – or retcon, retconning – is a kludge, an act of “repair” done to a narrative to ease the consequences of what is usually some external, often commercial, pressure. Its literate cousin is peripeteia, the moment of sudden reversal, the turning point, the more specific version of which is anagnorisis, the recognition, the new knowledge that changes our understanding of everything. The archetypical example of this is in Sophocles’ Oedipus Rex, when the terrible knowledge that he is the father-killing, mother-schtupping abomination causing the plague in his city dawns on the title character.

What happened to Bobby Ewing in Dallas was clumsy to the point of loutishness, and the worst thing about it was what it implied about the audience: that it might roll its eyes, but there was a reasonable certainty it would keep right on watching. (The series lasted another 5 years after Bobby’s return.) The moment of anagnorisis in Oedipus Rex is, in contrast, narrative perfection; the story could end no other way. (It no doubt helped, of course, that the Greek audience already knew the ending.) Read more »

by Kyle Munkittrick

To write well with AI, you’ve got to understand Socrates.

Paul Graham and Adam Grant argue that having AI write for you ruins your writing and your thinking. Now, honestly, I tend to agree, but I thought these smart people were making a couple mistakes.

First, they seemed to be criticizing first drafts. If you asked a person to write a poem in five minutes with vague instructions, unless they were a champion haiku composer like Lady Mariko from Shogun, it would probably be pretty bad. AI is best in conversation, reacting to feedback. Sure, the initial draft might be bad, but AI can revise, just like we can. Second, and more important, if AI shouldn’t be doing the writing, it should probably be the critic. Even if it didn’t have good taste, it could surely evaluate a specific piece, given sufficient prompt scaffolding. Right?

After completing a major portion of a draft of an in-progress novel, I decided to test my theory. I shared the first Act with Claude (3 Sonnet and Opus) and Boom! I got exactly what I hoped for—some expected constructive criticism along with glowing praise that my novel draft was amazing and unique.

In reality, it was not.

This was not a skill issue! It was a temptation issue. My prompt was ostensibly well-crafted. I knew how to avoid exactly this problem, but I didn’t want to. I knew, deep down, that my novel was, in fact, overstuffed, weirdly paced, exposition dumpy, and had half a dozen other rookie mistakes. Of course it was! It was a draft! But I crippled my AI critic so that I could get a morale boost.

The sycophantic critic is an under-appreciated, and, to me, equally concerning, risk of using AI when writing. Read more »

by Nils Peterson

I

A friend sent me a day or two ago a poem that contained this story:

The Buddha tells a story of a woman chased by a tiger.

When she comes to a cliff, she sees a sturdy vine

and climbs halfway down. But there’s also a tiger below.

And two mice—one white, one black—scurry out

and begin to gnaw at the vine.

I was reminded, when I read it, of a guy who was a regular on late night talk shows in the 60’s. His first name was Alexander. [I refuse to look him up. Maybe it will come.] He had written a book entitled May This House Be Safe From Tigers and I was thrown back to my young days as a father when this was my prayer at those times I was driven to prayer. I guess I felt my wife and I and our daughters could survive small catastrophes, but we also knew that there were those that were overwhelming, the Tigers of the world, Tygers, really, that could and would maul or eat you. But we were blessed, not that we didn’t have griefs, the deaths of parents, friends.

In my 80’s I became aware that tigers are very, very patient, are never altogether not there, and the vine where the black mouse and the white mouse gnaw grew thin. Our tyger was the ALS my wife was diagnosed as having.

The story goes on as her poem explains:

At this point

she notices a wild strawberry growing from a crevice.

She looks up, down, at the mice.

Then she eats the strawberry.

Well, what else is there to do but weep or eat the strawberry? The poem ends –

Oh, taste how sweet and tart

the red juice is, how the tiny seeds

crunch between your teeth.

And so we looked for strawberries. In Sweden, my ancestral country, wild strawberries are unbelievably delicious. They even have their own name, Smultron. Read more »

Elif Saydam. Free Market. 2020.

23 k gold, inkjet transfer and oil on canvas.

by Katalin Balog

Nathaniel in E. T. A. Hoffmann’s The Sandman loses his sanity over having fallen in love with a wooden doll, the beautiful automaton Olympia. Olympia is an invention of a mad scientist and a master of the dark arts. Like Mary Shelley’s monster, born of the romantic imagination, she is the first literary example of a human-like machine. As one of Nathaniel’s friends observes:

We have come to find this Olympia quite uncanny; we would like to have nothing to do with her; it seems to us that she is only acting like a living creature, and yet there is some reason for that which we cannot fathom.

We can sympathize with the sentiment. But uncanny as the wooden doll might have struck them, contemporary readers of the tale were meant to see Nathaniel’s infatuation as macabre farce – Olympia is clearly robotic and shows no signs of intelligence – orchestrated by dark forces either in the world or in his soul, we can’t know for sure.

The Enlightenment’s fascination with automata (Hoffmann’s story was published in 1816) prefigured our predicament, however. We now find ourselves in the curious position of having to give serious thought to the possibility, and increasingly, the reality of relationships with machines that hitherto were reserved for fellow humans. We also seem to have to defend ourselves against – or perhaps reconcile ourselves to – suggestions that AI will soon, perhaps in some regards already, surpass us in some of our most characteristically human activities, like understanding the feelings of others, or creating art, literature, and music. Read more »

by Lei Wang

It’s my birthday twice a month, every month. Or at least I treat each 13th and 27th as if it were my birthday. I don’t ask anyone else to pretend with me; I keep to the usual annual celebratory imposition. It is an internal orientation.

From morning to night on the 13th and 27th of the month (because I was born on a 13th and like odd numbers), I feel the day is special. I can do whatever I want. Technically, as a writer and freelance worker, I can pretty much always do what I want, but external circumstances don’t always match internal understandings. And the ability to always do what I want is also the pressure to be always working, no real evenings or weekends, because I can (though mostly because I’ve procrastinated during the day). How much work is enough when you work for yourself, when you are supposedly doing the work you love? Even resident doctors get two days off a month, to save their own lives. And so: my self-made fortnightly ritual.

What I always want to do is lounge around but on my “birthday,” I can lounge without an ounce of guilt. I may still choose to write a bit, but then I feel extraordinarily virtuous—to be working on my birthday! (Yes, my birthday is secretly a productivity hack.) I get the fancier chocolate at the grocery store which I may have justified on another day, but today, I don’t even have to justify. I light the jasmine-bergamot candle I have been hoarding. I am magnanimous with myself. Whatever I do, there is a rare sense of permissiveness that even real birthdays don’t have: there is some pressure to revel on actual birthdays, and the potential for disappointment, while on private birthdays I can do anything I want which includes nothing at all.

Perhaps I even need to work—probably, in fact, I have not quite planned my time well and unlike Jesus’ birthday or the birthdays of nations, my fake birthdays necessarily fall on random, inconvenient days. But even working, I think, just for today, I don’t have to be perfect. It’s my birthday. And there is a special pleasure in a random Tuesday no longer being random, just because I imagined it so. Read more »