Samuel Myon in Harper’s Magazine:

In Greek myth, Eos falls in love with Tithonus. She is the goddess of the dawn. He is a Trojan prince, yet still a mere mortal. Eos asks Zeus to give her mate the gift of eternal life—but, foolishly, she forgets to ask for eternal youth too.

Tithonus never dies; he just grows older and older. “Ruthless age,” goes the Homeric hymn recounting his story, is “dreaded even by the gods.” Tithonus becomes more decrepit and wizened with each passing year. Eventually, when he can no longer move, Eos has to shut him away, in a place where “he babbles endlessly, and no more has strength at all.” Eternal life amid the decline of one’s faculties is not a blessing but a curse. “Me only cruel immortality / Consumes: I wither slowly in thine arms,” Tithonus complains in Alfred Tennyson’s rendition of the myth (published in these pages in 1860), in a rare moment of lucidity that emerges from his everlasting gibberish.

The story of Tithonus no longer feels so outlandish, because our society postpones death to an unprecedented degree. Unlike immortals, we still pass. But the great majority of us, and not only the bad, now die old. In whatever nursing home he was parked in, Tithonus must have looked much like we increasingly do, as doctors continuously defer our mortality. We are approaching a time when a legion of Tithonuses will live in our midst. We have already felt the social and political consequences.

Over the last few years, breakthroughs in AI have been almost too numerous to track. Chatbots can now pass the same exams required of

It’s beautiful in North Carolina in March, which means that Zach has set out to use his metal detector in the woods near our house. He is certain that we are about to embark on a new journey as a family: owning our own junkyard.

On Thursday, a group of researchers

For a few weeks this spring, you couldn’t swing a thyrsus in New York without hitting a play about Antigone. Perhaps it started with Robert Icke’s Oedipus, the Broadway production from February, which featured a modern-day Antigone as a sulky teen who little suspects that her father is also her brother. Soon after, four different theaters across the five boroughs staged their own renditions of Sophocles’s famous play, reimagining his two-thousand-and-five-hundred-year-old mythic figure as, variously, a pregnant teenager, an analysis patient, an incestuous home renovator, and a freedom fighter in a fascist regime in the future. The last of these, in a bid to underscore the theme of rebellion across the ages, went so far as to include audio from the ICE raids in Minneapolis.

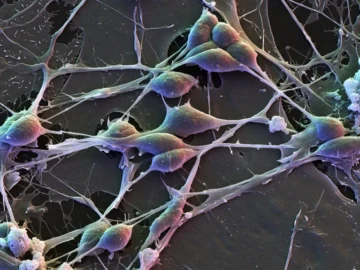

The core problem in oncology has always been one of discrimination. Cancer cells and normal cells are, at the molecular level, nearly identical. What distinguishes a cancer cell is dysregulation, a set of genetic switches flipped in the wrong direction, causing uncontrolled growth. For decades, finding and exploiting those switches required hunting through patient samples by hand, looking for patterns subtle enough to be almost invisible.

By analysing more than a million brain cells, researchers have uncovered widespread differences in patterns of gene activity between male and female brains.