(Note: Throughout February, at least one post will be devoted to Black History Month: A century of Black History Commemorations)

Enjoying the content on 3QD? Help keep us going by donating now.

Auren Hoffman at Summation:

Amazon knows everything you’ve ever bought. They could build an incredibly sophisticated profile of who you are and what you want and even what you need.

And their recommendations STILL haven’t improved since Bush (W.). Yes, the last time Amazon really improved its recommendations was BEFORE the US invaded Iraq.

Instead, they just recommend more of what you just bought.

The same pattern plays out for everything: buy razors → here are 200 more razor options.

The fundamental assumption seems to be that people want to endlessly comparison shop the thing they just bought. Which is INSANE.

What we really want is delightful recommendations. We want to see products that we do not know about that would be good fits for us. This is very doable in the age of AI. But even mighty Amazon does not even try. They are completely out to lunch.

More here.

Edward Chen in Nature:

Scientists report that a type of giant virus multiplies furiously by hijacking its host’s protein-making machinery — long-sought experimental evidence that viruses can co-opt a system typically associated with cellular life.

The researchers found that the virus makes a complex of three proteins that takes over its host’s protein-production system, which then churns out viral proteins instead of the host’s own.

Virologists had already suspected that viruses could perform such a feat, says Frederik Schulz, a computational biologist at the Lawrence Berkeley National Laboratory in California, who was not involved with the work. But the new findings, published in Cell on 17 February, are an important confirmation. Compared with other viruses, he says, this one “has a more powerful toolbox to really replace what the host is doing”.

More here.

Dan Kagan-Kans at Transformer:

“Somehow all of the interesting energy for discussions about the long-range future of humanity is concentrated on the right,” wrote Joshua Achiam, head of mission alignment at OpenAI, on X last year. “The left has completely abdicated their role in this discussion. A decade from now this will be understood on the left to have been a generational mistake.”

It’s a provocative claim: that while many sectors of the world, from politics to business to labor, have begun engaging with what artificial intelligence might soon mean for humanity, the left has not. And it seems to be right.

As a movement, it appears the left has not been willing to engage seriously with AI — despite its potential to affect the lives and livelihoods of billions of people in ways that would normally make it just the kind of threat, and opportunity, left politics would concern itself with.

Instead, the left has, for a mix of reasons good and bad, convinced itself that AI is at the same time something to hate, to mock, and to ignore.

More here.

We would like to linger here even longer,

especially when the sun lays gold

over lawns, some so white-fenced, idyllic, and sexy

they obsess us with what? —ourselves? —That recurring

wilderness within? All night the rain

gently sucking leaves till morning. And here

are the flowers that put out our eyes. We should throw

our bodies onto the earth, just as we throw

them onto each other. Reyes and I walked long,

talking of love in a place with no people. We could feel

its absence burning within. First at twilight

in the cow boneyard. Then next morning

beside the birthing pen. The way the heifer licked

the wet calf up, then mooed

life into its bones. This, when nature is

only itself, when love is

sheer will. But still, the mother’s eyes bulging

toward the birth, and the mooing that goes down

into the glistening body, down into the soft hooves,

and down into the earth. This mooing

that goes on and on and will not stop, up to

the final sucking ass and carcass of death. This,

what we would, but lack. We choose instead

such sheer reprehensible and pansexual

delights, vogueing us beyond our shirted longing,

incomprehensible despite. Quiet fools we move

and are moved by movings until staring

through the glass eyes of pleasure, we feel its palace

collapse. Oh how we long to feel that muscled

abandon for which there is no height,

an expanse whose taste is

salt, and whose hearing is all underwater,

all struggle, all breathing, one ocean, one

night. Everywhere now new leaves are ungluing their green

…… encased

with light. What we give changes us into something more

airy, something to last.

by Mark Irwin

from Quick, Now, Always

BOA Editions, 1996

Alaina Morgan in Black Perspectives:

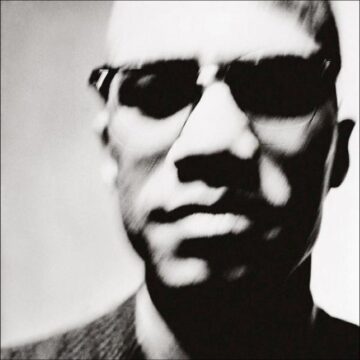

In 1963, famed American photographer Richard Avedon shot a set of rare portraits of Malcolm in which he appears unsmiling, facial features blurred, the rims of his iconic glasses just visible, and his eyes receding into the dark spaces around their sockets. He appears as a wisp – we know it is him, but only a faint impression of him is actually visible. Scholar Graeme Abernethy notes that the photograph is one of the few instances that we have which so starkly represents the malleability of Malcolm’s image. He writes that the photograph is “cryptic in its purposeful haze, skull-like in its coloration and concealment of Malcolm’s eyes in shadow, yet intimate in its perspective . . . [and therefore, it] seems to allude to the transubstantiation enabled by his death.” This malleability is possible because Malcolm’s life, with all of its possibility, was snuffed out as it began to shine the brightest.

More here.

From Jonathan Bate’s Literary Remains:

One of the most consequential misunderstandings in the history of literary criticism turns on a single Greek word. In Aristotle’s Poetics, that word is hamartia. It is usually rendered, in classrooms and handbooks, as “tragic flaw,” and on that translation an entire tradition of reading tragedy has been erected. Yet if we return to Aristotle’s Greek and trace the word’s history with some philological care, it becomes clear that this familiar formula rests on a slow but decisive mistranslation—less an error at a single moment than a long cultural drift in which a term meaning “mistake” gradually hardened into a doctrine of moral defect.

In classical Greek, hamartia belongs to the language of action rather than character. Its root sense is concrete and kinetic: to miss one’s mark, as an archer misses the target. By extension, it denotes an error, a misjudgment, a false step—often one made in ignorance of some crucial fact. Aristotle uses the term this way throughout his works, ethical and otherwise.

More here.

Amber Dance in Quanta:

Researchers have been mapping the brain for more than a century. By tracing cellular patterns that are visible under a microscope, they’ve created colorful charts and models that delineate regions and have been able to associate them with functions. In recent years, they’ve added vastly greater detail: They can now go cell by cell and define each one by its internal genetic activity. But no matter how carefully they slice and how deeply they analyze, their maps of the brain seem incomplete, muddled, inconsistent. For example, some large brain regions have been linked to many different tasks; scientists suspect that they should be subdivided into smaller regions, each with its own job. So far, mapping these cellular neighborhoods from enormous genetic datasets has been both a challenge and a chore.

Recently, Tasic, a neuroscientist and genomicist at the Allen Institute for Brain Science, and her collaborators recruited artificial intelligence for the sorting and mapmaking effort.

More here.

Almos C. Molnar and Steven Sloman at ScienceDirect:

Zero-sum bias refers to the tendency to believe that anything gained by one side is lost by the other when in fact win-win outcomes are available. Prior research has documented the bias in several domains but little is known about what triggers it. As politics is a hotbed of zero-sum beliefs, we hypothesized that politicizing problems would act as either a situational trigger or inhibitor for partisans and that this would lead them to propose qualitatively different solutions. We report five studies that find evidence for our hypotheses. We demonstrate that Democrats find less-effective solutions to a problem when it is framed in terms of corporate tax cuts, and more-effective solutions when a formally identical problem is framed in terms of pro-immigration policies, than when it is framed non-politically. Republicans exhibit the opposite pattern. Thus, we find differential problem-solving performance between the two political groups only in the politicized problem frames. We show that the political frames interfere with the process of problem solving per se, as opposed to rendering some solutions socially inadmissible. We also show that this interference impacts participants not by dialing up or down the effort they put in, but by constraining their way of thinking about the space of possible solutions. Finally, we demonstrate that the outcome of the problem-solving process is predicted by the presence or absence of zero-sum beliefs about the particular political frame, but not by participants’ affective response to it.

More here.

Ashawnta Jackson in JSTOR Daily:

While bedridden with dysentery, Wright picked up a volume of haiku—a Japanese poetic form containing three unrhymed lines with a 5/7/5 syllabic pattern—and fell in love with the form. As Iadonisi writes, Wright “began composing in August 1959 and, within a few months, he had written four thousand haiku.” He prepared just over 800 of his poems for publication, but when he submitted them to a publisher in 1960, the manuscript was rejected. His haiku wouldn’t see publication until 1998. So what was it about the form that captivated him?

…As he wrote in his 1957 essay collection, White Man, Listen, “If the expression of the American Negro should take a sharp turn toward strictly racial themes, then you will know by that token that we are suffering our old and ancient agonies at the hands of our white American neighbors. If, however, our expression broadens, assumes the common themes and burdens of literary expression which are the heritage of all men, then by that token you will know that a humane attitude prevails in America towards us.” But this may all be as simply put as American literature scholar Abraham Chapman’s explanation in a 1967 article, “What is important is the Negro writer’s right to full freedom of choice in subject matter and in artistic voice.”

More here.

Jim Hartley in National Association of Scholars:

Every now and then, I had heard that Wright was a fantastic writer. But not often enough; most of the time I heard about him it was in the context of “protest novel” and “communism.” But, I finally got around to reading Native Son. Wow. Great book, and as noted above, most probably, Great Book. But, its greatness has nothing to do with being a means of implicating America in being racist.

More here.

Omari Weeks at Bookforum:

On Morrison, Namwali Serpell’s foray into the expanding field of Morrison scholarship, picks up where these previous monographs left off. Serpell, an award-winning fiction writer and critic and professor of English at Harvard, meticulously pored over archival materials made accessible by the Morrison estate and Princeton University to produce a breathtaking excavation of the inner workings and outer impression of a swaggering Black genius. With this book, Serpell extends Mayberry’s research on Morrison’s critical reception by providing more critical reception of Morrison’s fiction and nonfiction oeuvre. She adds further grist to Williams’s careful exploration of what percolated just below Morrison’s famously steely surface. Altogether, On Morrison homes a novelist-critic’s eye in on a novelist-critic’s body of work and not only considers the beautiful world-building that has enamored publics for decades but does so with attention steeped in the craft of Black virtuosity.

For many reasons, Serpell’s magisterial deep dive into the output of one of the most profound writers of all time should not work. The breadth contained in her subject can petrify even the most seasoned reader; setting out to cover it in its entirety risks resorting to dull platitudes in the face of overwhelming precision.

More here.
