Category: Recommended Reading

César Pelli (1926 – 2019)

Why this ancient philosophical tradition is astonishingly suitable for modern life — down to its physics

Temma Ehrenfeld in AlterNet:

‘The pursuit of Happiness’ is a famous phrase in a famous document, the United States Declaration of Independence (1776). But few know that its author was inspired by an ancient Greek philosopher, Epicurus. Thomas Jefferson considered himself an Epicurean. He probably found the phrase in John Locke, who, like Thomas Hobbes, David Hume and Adam Smith, had also been influenced by Epicurus. Nowadays, educated English-speaking urbanites might call you an epicure if you complain to a waiter about over-salted soup, and stoical if you don’t. In the popular mind, an epicure fine-tunes pleasure, consuming beautifully, while a stoic lives a life of virtue, pleasure sublimated for good. But this doesn’t do justice to Epicurus, who came closest of all the ancient philosophers to understanding the challenges of modern secular life.

…Epicureans did focus on seeking pleasure – but they did so much more. They talked as much about reducing pain – and even more about being rational. They were interested in intelligent living, an idea that has evolved in our day to mean knowledgeable consumption. But equating knowing what will make you happiest with knowing the best wine means Epicurus is misunderstood. The rationality he wedded to democracy relied on science.

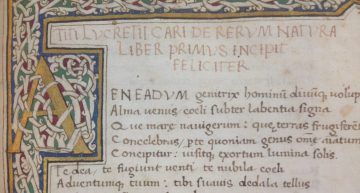

…Its principles read as astonishingly modern, down to the physics. In six books, Lucretius states that everything is made of invisible particles, space and time are infinite, nature is an endless experiment, human society began as a battle to survive, there is no afterlife, religions are cruel delusions, and the universe has no clear purpose. The world is material – with a smidgen of free will. How should we live? Rationally, by dropping illusion. False ideas largely make us unhappy. If we minimise the pain they cause, we maximise our pleasure.

More here.

This New Liquid Is Magnetic, and Mesmerizing

Knvul Sheikh in The New York Times:

Lodestone, a naturally-occurring iron oxide, was the first persistently magnetic material known to humans. The Han Chinese used it for divining boards 2,200 years ago; ancient Greeks puzzled over why iron was attracted to it; and Arab merchants placed it in bowls of water to watch the magnet point the way to Mecca. In modern times, scientists have used magnets to read and record data on hard drives and form detailed images of bones, cells and even atoms. Throughout this history, one thing has remained constant: Our magnets have been made from solid materials. But what if scientists could make magnetic devices out of liquids? In a study published Thursday in Science, researchers managed to do exactly that. “We’ve made a new material that has all the characteristics of an ordinary magnet, but we can change its shape, and conform it to different applications because it is a liquid,” said Thomas Russell, a polymer scientist at the University of Massachusetts, Amherst, and the study’s lead author. “It’s very unique.”

Using a special 3D printer, Dr. Russell and his colleagues at the Lawrence Berkeley National Laboratory injected iron oxide nanoparticles into millimeter-scale droplets of toluene, a colorless liquid that does not dissolve in water. The team also added a soap-like material to the droplets, and then suspended them in water. The soap-like material caused the iron oxide nanoparticles to crowd together on the surface of the droplets and form a semisolid shell. “The particles get stuck in place, like a traffic jam at 5 o’clock,” Dr. Russell said. Next, the scientists placed the droplets on a stirring plate with a spinning bar magnet, and observed something extraordinary: The solid magnet caused the positive and negative poles of the liquid magnets to follow the external magnetic field, making the droplets dance on the plate. When the solid magnet was removed, the droplets remained magnetized.

The motion of liquid droplets can also be guided with external magnets. Thus employed, liquid magnets could be useful for delivering drugs to specific locations in a person’s body, and for creating “soft” robots that can move, change shape or grab things.

More here.

Saturday, July 20, 2019

Spadework

Alyssa Battistoni in n+1:

IN 2007, WHEN I WAS 21 YEARS OLD, I wrote an indignant letter to the New York Times in response to a column by Thomas Friedman. Friedman had called out my generation as a quiescent one: “too quiet, too online, for its own good.” “Our generation is lacking not courage or will,” I insisted, “but the training and experience to do the hard work of organizing — whether online or in person — that will lead to political power.”

I myself had never really organized. I had recently interned for a community-organizing nonprofit in Washington DC, a few months before Barack Obama became the world’s most famous (former) community organizer, but what I learned was the language of organizing — how to write letters to the editor about its necessity — not how to actually do it. I graduated from college, and some months later, the global economy collapsed. I spent the next years occasionally showing up to protests. I went to Zuccotti Park and to an attempted general strike in Oakland; I participated in demonstrations against rising student fees in London and against police killings in New York. I wrote more exhortatory articles. But it wasn’t until I went to graduate school at Yale, where a campaign for union recognition had been going on for nearly three decades, that I learned to do the thing I’d by then been advocating for years.

More here.

Iran: The Case Against War

Steven Simon and Jonathan Stevenson in New York Review of Books:

There is no plausible reason for the United States to go to war with Iran, although the Trump administration appears to be preparing to do so. In mid-May, the Pentagon presented the White House with plans for deploying up to 120,000 troops to the Middle East to respond to Iranian attacks on US forces or the acceleration of Iran’s nuclear weapons program.

To be sure, the Iranian government is guilty of genuine transgressions against American interests and values. It backs Syria’s brutal dictator, Bashar al-Assad. It undermines the security of Israel by organizing and sustaining Shia militias in Syria, supporting the Palestinian extremist group Hamas, and arming the Lebanese Shia militia Hezbollah. By serving as Iran’s proxy on Israel’s border, Hezbollah exposes Lebanon—long a fragile state—to the risk of Israeli retaliation. Iran has also supported Shia militias in Iraq that in theory answer to the Iraqi prime minister through a special commission, but in practice are outside the national military command structure, which compromises the cohesion and authority of the Iraqi state.

More here.

An Economy in Waiting

John Case in The New Republic:

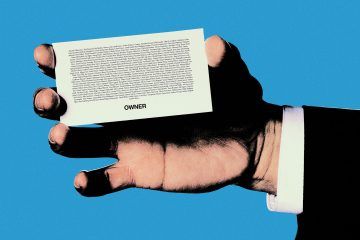

The 2020 Democratic field now teems with proposals to mitigate rampaging wealth and income inequality, from Kamala Harris’s plan to increase tax credits for low- and moderate-income families to Elizabeth Warren’s wealth tax. Such plans overlook, however, the principal set of relations that skew American capitalism upward: the ownership and operational control of business enterprises.

This failing is puzzling, because ownership and control so obviously matter. People who own companies, or who run them on the owners’ behalf, decide where and how much to invest. They decide how many people to hire, what sort of working conditions to provide, and—within broad limits—how much to pay those employees. All such decisions go a long way toward determining how well an economy serves its participants.

And decisions aside, ownership mightily affects the distribution of wealth and income all by itself. To take just one example, consider a successful midsize company—a regional restaurant chain, say, or a construction firm with $200 million in annual revenue and $10 million to $20 million in net profit. If it’s owned by one person or a small group, as is often the case, these owners may receive a million or more in income every year, and they have an asset likely worth tens of millions. Most of their employees will be lucky to earn $50,000 a year and save up a few thousand in a 401(k) plan. Surely broadening the ownership of business is a good idea.

More here.

‘Plastic Emotions’ by Shiromi Pinto – An Architectural Romance

Shahidha Bari at The Guardian:

There’s an air of romance to nearly all the places Shiromi Pinto describes in Plastic Emotions, her novel about a love affair between two great 20th-century architects. Some of those places are tropical and alluring. In Sri Lanka, we head to Kandy with its verdant hills, and then Colombo with its chattering bourgeoisie. In India, Pinto takes us to Chandigarh and its elegantly experimental modernist buildings. Even in Paris and London, we are surrounded by the glamour of bohemians and their postwar parties. But it’s in the mildly prosaic confines of a conference in Bridgwater, Somerset, that the Swiss-French architect Le Corbusier seems first to have collided with a Sri Lankan architect called Minnette de Silva.

more here.

“The Liberal Idea Has Become Obsolete”

Martin Jay at The Point:

I was first alerted to Raymond Geuss’s sour anti-commemoration of Jürgen Habermas’s ninetieth birthday, “A Republic of Discussion,” coincidentally on the same day that Vladimir Putin declared the obsolescence of liberalism in a meeting with Donald Trump. Trump, with the exquisite cluelessness that has made him so easy to mock, took the remark to refer to American political liberals, such as those in the Democratic Party. But Putin’s target was something much larger: the tradition of liberal democratic norms and institutions he and his fellow authoritarian populists are determined to undermine. It is the tradition that Geuss finds so lamely defended by Habermas’s theory of communicative action, which believes in discursive deliberation as a fundamental principle of a liberal democratic polity.

Since guilt by association may not be a fair tactic—although in this case, it is hard to resist—let’s look at Geuss’s argument on its own terms. The first point to make is that it is, in fact, an argument, made publicly, drawing on reasons and evidence, employing Geuss’s characteristic rhetorical flair and keen intellect, and not a mindless rant.

more here.

Which Way to the City on a Hill?

Marilynne Robinson at the NYRB:

Recently, at a lunch with a group of graduate students, conversation turned to American colonial history, then to John Winthrop’s 1630 speech “A Modell of Christian Charity,” associated now with an image borrowed from Jesus, “a city on a hill.” This phrase has been grossly misinterpreted, both Winthrop’s use of it and Jesus’. In any case, the students pronounced the speech capitalist, with a certainty and unanimity that, quite frankly, is inappropriate to any historical subject, and would be, even if the students, or the teachers who gave them the word, could define “capitalist.” Because I encounter variants of this conversation in such settings all over the country, I should not be heard as criticizing any particular university when I say that such certainty is not the product of good education. Indeed, it is distinctively the product of bad education.

This characterization of Winthrop’s speech had the finality of a moral judgment, which is odd but, again, typical. For these purposes, capitalism is simply what America is and does and has always done and will do into any imaginable future. A dark stream of greed flows beneath its glittering surface, intermingling with its best works, its highest motives, and it is naive to think otherwise. Like the country itself, it is a rude, robust intrusion of unbridled self-interest upon a world whose traditional order was humane—in the best sense, civilized. Capitalism is, by these lights, original with and exclusive to us, except where Americanization has extended its long reach. This is believed so utterly that the fact that Marx was making his critique of the mature industrial/colonial economy of Britain is overlooked or forgotten.

more here.

Hacking Humans: Yuval Noah Harari Roundtable at the École polytechnique fédérale de Lausanne

Yuval Noah Harari speaks about human hackability and sits down for a conversation moderated by Leila Delarive: With Ken Roth (Executive Director of Human Rights Watch), Effy Vayena (professor of bioethics at ETH Zurich), and Jacques Dubochet (winner of the Nobel Prize for Chemistry) — accompanied by presentations from Grégoire Courtine (EPFL) and Jocelyne Bloch (CHUV), and Jamie Paik (EPFL).

Man and the Moon

Alex Colville in 1843 Magazine:

Gazing at the Moon is an eternal human activity, one of the few things uniting caveman, king and commuter. Each has found something different in that lambent face. For Li Po, a lonely Tang dynasty poet, the Moon was a much-needed drinking buddy. For Holly Golightly, who serenaded it during the “Moon River” scene of “Breakfast at Tiffany’s”, it was a “huckleberry friend” who could whisk her away from her fire escape and her sorrows. By pretending to control a well-timed lunar eclipse, Columbus recruited it to terrify Native Americans into submission. Mussolini superstitiously feared sleeping in its light, and hid from it behind tightly drawn blinds. It would be a challenge for any museum to chronicle the long, complicated relationship humanity has with the Moon, but the National Maritime Museum in Greenwich, London has done it. For the 50th anniversary of the Apollo 11 Moon landing, the museum has placed relics from those nine remarkable days in July 1969 alongside cuneiform tablets, Ottoman pocket lunar-calendars, giant Victorian telescopes and instruments designed for India’s first lunar probe in 2008. This impressive exhibition tells the history of humanity’s fascination with this celestial body and looks to the future, as humans attempt once again to return to the Moon.

The Moon was once the domain of the gods, who had placed this seemingly smooth orb in the heavens, the finishing touch to a perfectly ordered creation. The red of the blood Moon was a sign of divine imbalance that only humanity could correct: the Chinese banged mirrors, the Inca shouted and the Romans frantically waved burning torches.

More here.

I Wanted to Know What White Men Thought About Their Privilege. So I Asked.

Claudia Rankine in The New York Times:

In the early days of the run-up to the 2016 election, I was just beginning to prepare a class on whiteness to teach at Yale University, where I had been newly hired. Over the years, I had come to realize that I often did not share historical knowledge with the persons to whom I was speaking. “What’s redlining?” someone would ask. “George Washington freed his slaves?” someone else would inquire. But as I listened to Donald Trump’s inflammatory rhetoric during the campaign that spring, the class took on a new dimension. Would my students understand the long history that informed a comment like one Trump made when he announced his presidential candidacy? “When Mexico sends its people, they’re not sending their best,” he said. “They’re sending people that have lots of problems, and they’re bringing those problems with us. They’re bringing drugs. They’re bringing crime. They’re rapists.” When I heard those words, I wanted my students to track immigration laws in the United States. Would they connect the treatment of the undocumented with the treatment of Irish, Italian and Asian people over the centuries?

In preparation, I needed to slowly unpack and understand how whiteness was created. How did the Naturalization Act of 1790, which restricted citizenship to “any alien, being a free white person,” develop over the years into our various immigration acts? What has it taken to cleave citizenship from “free white person”? What was the trajectory of the Ku Klux Klan after its formation at the end of the Civil War, and what was its relationship to the Black Codes, those laws subsequently passed in Southern states to restrict black people’s freedoms? Did the United States government bomb the black community in Tulsa, Okla., in 1921? How did Italians, Irish and Slavic peoples become white? Why do people believe abolitionists could not be racist?

More here.

Friday, July 19, 2019

Bauhaus: The strains of middle age

Morgan Meis in The Easel:

Walter Gropius was fond of making claims about The Bauhaus like the following: “Our guiding principle was that design is neither an intellectual nor a material affair, but simply an integral part of the stuff of life, necessary for everyone in a civilized society.” Idealistic and vague, perhaps to a fault, but one gets the point. Bauhaus design was never intended to be alienating. It was supposed to be the opposite. It was supposed to preserve the relative “health” of the craft traditions while marching boldly into a new era. It was supposed to help cure the potential wounds of modern life, wounds caused, initially, by the industrial revolution and its jarring impact on everything from the natural landscape to the material and design of our cutlery. Anti-alienation was really the one guiding intuition behind The Bauhaus as a school and then, more amorphously, as a general approach to architecture and design.

Somehow, though, something changed. Perhaps this was due to the process by which Bauhaus ideas spread from Weimar and out into the world at large, including the historical circumstances by which leading practitioners of Bauhaus were driven from Germany (by Nazism) and then found themselves in places like England and America. Whatever its precise origin, by the 1960s and 70s, Bauhaus ideas were frequently cited not as a cure for alienation but rather as its cause.

More here.

NASA’s Next 50 Years

Robert Zubrin in The New Atlantis:

NASA deserves a lot of credit. A space agency funded by 4 percent of the world’s population, it is responsible for launching 100 percent of the rovers that have ever wheeled on Mars; all the probes that have visited Jupiter, Saturn, Uranus, Neptune, and Pluto; nearly all the major space telescopes; and all the people who have ever walked on the Moon. But while its robotic planetary exploration and space astronomy programs continue to produce epic results, for nearly half a century its human spaceflight effort has been stuck in low Earth orbit.

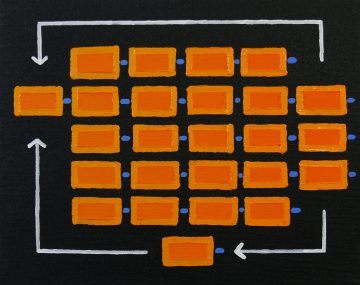

The reason for this is simple: NASA’s space science programs accomplish a lot because they are mission-driven. In contrast, the human spaceflight program has allowed itself to become constituency-driven (or, to put it less charitably, vendor-driven). In consequence, the space science programs spend money in order to do things, while the human spaceflight program does things in order to spend money. Thus, the efforts of the science programs are focused and directed, while those of the human spaceflight program are purposeless and entropic.

This was not always so. During the Apollo period, NASA’s human spaceflight program was strongly mission-driven. We did not go to the Moon because there were three random constituency-backed programs to develop Saturn V boosters, command modules, and lunar excursion vehicles, which luckily happened to fit together, and which needed something to do to justify their funding. Rather, we had a clear goal — sending humans to the Moon within a decade — from which we derived a mission plan, which then dictated vehicle designs, which in turn defined necessary technology developments. That’s why the elements of the flight hardware set all fit together. But in the period since, with no clear mission, things have worked the other way.

More here.

A Cultural Darwinian Analysis of Witch Persecutions

Steije Hofhuis and Maarten Boudry in Cultural Science:

The theory of Darwinian cultural evolution is gaining currency in many parts of the socio-cultural sciences, but it remains contentious. Critics claim that the theory is either fundamentally mistaken or boils down to a fancy re-description of things we knew all along. We will argue that cultural Darwinism can indeed resolve long-standing socio-cultural puzzles; this is demonstrated through a cultural Darwinian analysis of the European witch persecutions. Two central and unresolved questions concerning witch-hunts will be addressed. From the fifteenth to the seventeenth centuries, a remarkable and highly specific concept of witchcraft was taking shape in Europe. The first question is: who constructed it? With hindsight, we can see that the concept contains many elements that appear to be intelligently designed to ensure the continuation of witch persecutions, such as the witches’ sabbat, the diabolical pact, nightly flight, and torture as a means of interrogation. The second question is: why did beliefs in witchcraft and witch-hunts persist and disseminate, despite the fact that, as many historians have concluded, no one appears to have substantially benefited from them? Historians have convincingly argued that witch-hunts were not inspired by some hidden agenda; persecutors genuinely believed in the threat of witchcraft to their communities. We propose that the apparent ‘design’ exhibited by concepts of witchcraft resulted from a Darwinian process of evolution, in which cultural variants that accidentally enhanced the reproduction of the witch-hunts were selected and accumulated. We argue that witch persecutions form a prime example of a ‘viral’ socio-cultural phenomenon that reproduces ‘selfishly’, even harming the interests of its human hosts.

More here.

The Secret Life Of Brian: Documentary on the Monty Python film

Style and Grammar Guides Suck

Jonathan Russell Clark at The Vulture:

The reason we pay happily for these manuals is straightforward, if a little sad. We’ve been convinced that we need them — that without them, we’d be lost. Readers aren’t drawn to in-depth arguments on punctuation and conjugation for the sheer fun of it; they’re sold on the promise of progress, of betterment. These books benefit from the dire misconception that they are for everyday people, when, in fact, they’re for editors and educators.

Take this year’s Dreyer’s English, whose jacket description reads in part, “We all write, all the time: blogs, books, emails. Lots and lots of emails. And we all want to write better.” Even if we accept the idea that we all (or most of us) want to become clearer and more interesting writers, is grammar truly the key to such improvements?

No, it’s not.

more here.

Sound and Resistance in Damon Krukowski’s ‘Ways of Hearing’

Will Meyer at The Baffler:

Contemporary technological anxiety—when not directed towards the internet wholesale—is just as often pitched against sound. We’ve recently started to ask what platform monopolies are doing to the quality of music, or whether Amazon is killing record (and book) stores, or if podcasts are further ensnaring us online, destroying our private thoughts. It’s enough, given his pedagogical wisdom, to make us wonder: What would Berger say?—about earbuds or streambait pop.

To that end, musician and author Damon Krukowski launched a podcast, and now a book, that nods in Berger’s direction. Ways of Hearing is a slim work, really a blown-out podcast script, beautifully designed and illustrated, which takes readers on an admirably reported tour of sound from analog to digital, from 1960s New York to our era of “hyper-gentrification,” all the while considering the relation between noise and power. And, crucially, Krukowski doesn’t privilege a hierarchy between digital and analog but contests it by appreciating—that is, paying attention to—their material differences. All the better to apprehend the political systems that arrange what we hear in the world.

more here.

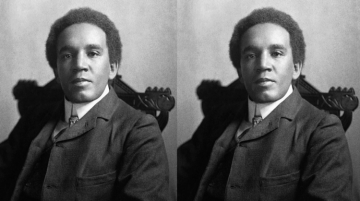

Samuel Coleridge-Taylor’s Violin Concerto

Sudip Bose at The American Scholar:

Coleridge-Taylor was deeply interested in both African and African-American melodies. A meeting with the American writer Paul Laurence Dunbar in 1896, for example, had led to a vocal work, African Romances, with the two of them deciding to put on a series of performances together. Another work, the Twenty-Four Negro Melodies for piano, was influenced by Dunbar’s work. “What Brahms has done for the Hungarian folk music,” Coleridge-Taylor wrote in the preface to the score, “Dvořák for the Bohemian, and Grieg for the Norwegian, I have tried to do for these Negro melodies.” But though the composer’s political consciousness had been informed by an abiding interest in pan-Africanism, though his explorations of his paternal ancestry had him briefly flirting with the idea of relocating to the United States, he primarily filtered the raw materials of black American and African music through a distinctly European sensibility, Brahms and Dvořák being his guiding lights. Coleridge-Taylor’s true métier was the realm of light English music, and as interest in that field diminished with the passing of the 20th century, with the decline of the amateur choruses that once would have taken up such repertoire, so too did an interest in his music. This was already true by 1912, the year the composer died from pneumonia, having collapsed at the West Croydon station, while waiting for a train.

more here.