by Jonathan Kujawa

For several years now, the AI companies have been using the annual Putnam exam as one of these benchmarks. The Putnam exam is taken every December by thousands of undergraduates around the world. It has twelve problems, each worth ten points, for a total of 120 points. The exam notoriously difficult [1]. Earning two points on the exam puts you in the top 50%. If you’d like to try this year’s questions, you can find them along with their solutions here.

As this article describes, a number of AI systems were tested against this year’s Putnam exam. The best of them scored 103 points, putting it among the top three humans. Even the worst model earned 58 points, making it comparable to the human ranked 78th (out of the 4329 who took the exam).

One advantage of this benchmark is the Putnam problems are written from scratch every year. It is unlikely that the AI systems are simply repeating answers they’ve seen in their training data [2]. On the other hand, comparing AI Putnam results with those of humans is rather artificial. The AI systems can throw massive amounts of effort against the problems. If you gave me a million well-trained undergraduates in a windowless data center, I bet I could generate a pretty impressive solution set [3].

The Putnam exam itself is rather artificial. The problems are designed to be brain teasers of a sort. They are standalone questions that often require some clever thinking, but are not the sort of problem you are likely to see in mathematical research or in the mathematics of real-world problems. There are many Putnam problems along with their solutions available in the training data. Solving a new Putnam problem is impressive, but not earth-shattering.

The Putnam exam itself is rather artificial. The problems are designed to be brain teasers of a sort. They are standalone questions that often require some clever thinking, but are not the sort of problem you are likely to see in mathematical research or in the mathematics of real-world problems. There are many Putnam problems along with their solutions available in the training data. Solving a new Putnam problem is impressive, but not earth-shattering.

In a similar spirit, enthusiasts have been prompting AI systems with problems posed by Paul Erdos. We’ve crossed paths with Erdos a number of times here at 3QD (here, here, and here, for example). He has been described as the “prince of problem solvers and the absolute monarch of problem posers.” Famously, Erdos would offer small cash prizes for his favorite problems. I once heard it said that Erdos surely violated minimum wage laws, since the problems invariably took more time than the money alone warranted.

There is an online database of 1183 problems posed by Erdos, with over half of them still waiting to be solved. In January, it was announced that ChatGPT-5.2 had provided a proof of the Erdos problem #278. More precisely, Kevin Barreto, an undergraduate student at the University of Cambridge, prompted ChatGPT with problem #278. ChatGPT worked on the problem autonomously and provided a solution. As it does, ChatGPT provided the solution in English. Since LLMs are notorious liars, ChatGPT’s proof needed an independent check. The solution was fed into the Aristotle AI system, which converts text-based mathematical arguments into the formal language of the Lean system. We talked about Lean here on 3QD. A mathematical proof can be written into a sort of computer code that Lean is able to test. Lean has been rigorously vetted. It’s widely agreed that if Lean gives a thumbs-up, then your proof is watertight.

Both human readers and Lean agreed that ChatGPT’s solution is correct. As far as we know, this problem hasn’t been solved before. That said, Carl Pomerance pointed out that a result of his from 2014 is similar to the argument used by ChatGPT. It may be that ChatGPT was aware of Pomerance’s paper and used it for inspiration. But I wouldn’t count that against ChatGPT. After all, I and every other mathematician finds inspiration in the work of others all the time. As Newton famously said, “If I have seen further, it is by standing on the shoulders of Giants.”

Both human readers and Lean agreed that ChatGPT’s solution is correct. As far as we know, this problem hasn’t been solved before. That said, Carl Pomerance pointed out that a result of his from 2014 is similar to the argument used by ChatGPT. It may be that ChatGPT was aware of Pomerance’s paper and used it for inspiration. But I wouldn’t count that against ChatGPT. After all, I and every other mathematician finds inspiration in the work of others all the time. As Newton famously said, “If I have seen further, it is by standing on the shoulders of Giants.”

The role of the Aristotle AI system is worth noting. Aristotle is an AI system that combines the informal “thinking” of LLMs like ChatGPT with the formal verification provided by Lean. By checking the logic of the AI system with Lean, you hope to avoid the subtle errors and lies that LLMs are so good at generating. It would be handy to have Aristotle or another AI system do the tedious work of turning your brilliant ideas into verifiable Lean code.

For example, imagine you want to write computer code that will operate a Boeing 747, a pacemaker, or your high-tech espresso machine. Nowadays, you can “vibe code” with Claude, ChatGPT, and other systems and generate software that looks and runs great. But what happens when there is a leap year, or a drop in air pressure, or someone sneezes? How do you verify that the code always works as expected? Indeed, Microsoft first created Lean because it wanted to have a system that could ensure code, mathematical formulas, and the like were certifiably correct.

The existence of Lean is another one of the reasons the AI companies are so interested in mathematics. AI systems are quite good at writing computer code. Partly because it is fairly easy to test computer code and verify that it does what it is intended to do. Coupling AI with Lean gives you the same sanity check for mathematical reasoning [4]. If an architect uses an AI system to design a bridge, it would be nice to know that the calculations and reasoning used to keep the bridge standing have been rigorously certified.

Not that this substitutes for human judgment. I have had many calculus students do correct calculations of the entirely wrong thing. Just because the math is correct doesn’t mean you’ve answered the right question!

AI systems don’t know when a question is worth answering. All they do is answer what we ask. Indeed, both the Putnam problems and the Erdos problem are cute puzzles, but arguably not that important. Carl Pomerance’s paper was published ten years ago. Likely the reason nobody thought to use his technique on problem #278 is that nobody bothered. After all, Erdos posed 1100+ problems, and in the thirty years since he “left” (as Erdos would have described it), hundreds of them are still open. Some are important, but lots of them are simply interesting puzzles, and there is a reason nobody has gotten around to them.

AI systems don’t know when a question is worth answering. All they do is answer what we ask. Indeed, both the Putnam problems and the Erdos problem are cute puzzles, but arguably not that important. Carl Pomerance’s paper was published ten years ago. Likely the reason nobody thought to use his technique on problem #278 is that nobody bothered. After all, Erdos posed 1100+ problems, and in the thirty years since he “left” (as Erdos would have described it), hundreds of them are still open. Some are important, but lots of them are simply interesting puzzles, and there is a reason nobody has gotten around to them.

With this in mind, a group of mathematicians posed a challenge to the AI community in February. They released ten questions that arose during their research, but, as far as they knew, had never appeared in the mathematics literature. The authors knew how to solve the problems, but had not yet published them. I should make sure not to overstate things. These problems are what a mathematician would call a lemma or proposition: results of interest, but only a stepping stone towards a bigger, more significant result. Solving one of these is a helpful step, but not the same as writing an entire research paper.

Since the problems came up organically in the work of researchers, you could reasonably say that these problems are more typical of the kind of thing we wish AI could help with. They called the project “First Proof.”

They gave AI folks a week to tackle the problems. They asked that the AI systems be left to solve the problems autonomously. The goal was to gauge what an AI system can do on its own. The results were mixed, at best. OpenAI claimed to solve half of them, but used some unspecified amount of human intervention. It was a far cry from the gangbuster results on the Putnam Exam.

The First Proof mathematicians have announced a Second Proof Challenge. They have invited research mathematicians to submit unpublished problems with their solutions this spring. Ten of them will be selected, and interested AI systems will be given one attempt to answer each problem. Those solutions will be graded, and then the problems will be released to the public along with the solutions shortly thereafter. It will be interesting to see how the Second Proof goes. Unlike last time, the AI developers are now forewarned and can optimize their systems. On the other hand, the systems will have only a single shot and have to be entirely autonomous — no OpenAI shenanigans this time. I predict the results will again be notable, but well short of the breathless hype of the AI techno-boosters.

Setting aside these fancy challenges, are actual working-class mathematicians using AI? Anecdotally, the answer is yes. I was recently told of one senior mathematician who finds AI so useful that they are willing to pay the $200/month for access to the best systems. Ken Ono, a well-regarded number theorist (and the PhD advisor of my colleague, Holly Swisher), recently left his faculty position at the University of Virginia to join a Math + AI startup called Axiom.

Setting aside these fancy challenges, are actual working-class mathematicians using AI? Anecdotally, the answer is yes. I was recently told of one senior mathematician who finds AI so useful that they are willing to pay the $200/month for access to the best systems. Ken Ono, a well-regarded number theorist (and the PhD advisor of my colleague, Holly Swisher), recently left his faculty position at the University of Virginia to join a Math + AI startup called Axiom.

I haven’t gone as far as they have, but I do find AI useful for brainstorming and for doing context-based searches. Recently, I was thinking through a possible line of attack on a problem, but it relied on results that were a little outside my areas of expertise, and I didn’t know quite the right terminology or the technical results needed. With a little prompting, Claude filled in the missing bit along with a reference to where in the literature that fact could be found. I could have done the same “by hand,” but it likely would have taken me an hour of searching the web and flipping through books. Claude gave it to me in a few minutes.

It is important to say that the usefulness is very much a function of my own expertise. I can quickly spot when Claude is going the wrong way, or inventing some plausible sounding nonesense. And I can read the literature and confirm that it works as Claude claims. Without my active involvement, Claude would generate a lot of garbage. This is why the challenge set by the First/Second Proof folks is important: it is a test of what AI systems can do on problems of interest when the AI doesn’t have training wheels.

That feels right to me. AI systems have strengths and weaknesses that we don’t really understand. Google’s DeepMind can beat the best human chess and Go players, and learned to do so by playing against itself countlessly many times. But that same technique apparently fails abysmally when it tries to learn NIM, a game my pre-teen nieces can easily master. Even in the area where AI has been most disruptive, software coding, the results have been mixed. This recent discussion on Hacker News has professional coders declaring AI to be the best and worst thing they’ve ever used.

It is an interesting time, but it’s far from clear where we will end up.

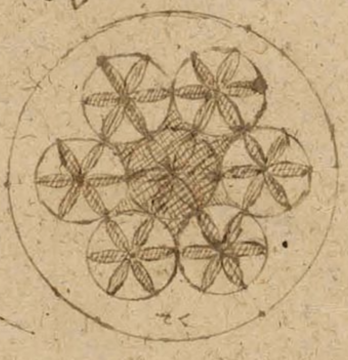

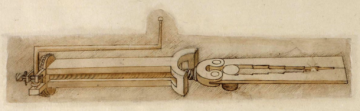

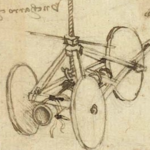

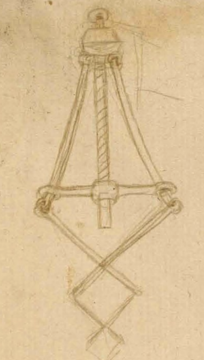

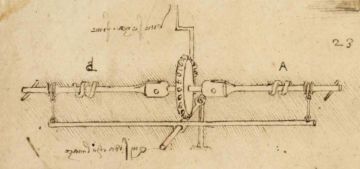

[0] All non-mohawk images from the recently digitized collection of manuscripts by Leonardo da Vinci.

[1] If I may brag a little bit, the Oregon State University Putnam team placed in the top 15% this year. All credit goes to the students and to my colleagues, Nick Marshall and Farid Bouya, for training the team.

[2] The article does mention that the exam problems were tested against AI over the summer. It is unclear what precautions were taken. There is some chance that the problems were known to some of the AI systems in advance.

[3] Thanks to Borel’s Infinite Monkey Theorem :-).

[4] It should be noted that Lean is not a magic box. It tests what you give it, and there are various ways an AI system could game the system to get a thumbs-up from Lean. A human still needs to be in the loop.

[5] Image borrowed from here.

Enjoying the content on 3QD? Help keep us going by donating now.