David Austin Walsh at the Boston Review:

Zohran Mamdani is now the mayor of New York City. Amid the chaos unleashed by Trump in the first weeks of 2026, it can be easy to lose sight of the truly seismic shift in politics his mayoralty represents.


To recap: an obscure, thirty-four-year-old state assemblyman and member of the Democratic Socialists of America, who a year ago could barely fill a seminar room at New York University, beat both incumbent mayor Eric Adams and former governor Andrew Cuomo by running on an unapologetically progressive ticket, critical of ICE and Israel as much as rents being too damn high. India Walton came close to a similar upset in Buffalo four years ago, but this time the socialists prevailed. In his inaugural address on New Year’s Day, sworn in by Bernie Sanders and quoting Fiorello La Guardia, Mamdani spoke of building a city “‘far greater and more beautiful’ for the hungry and the poor.” Handing out free tickets to a theater festival earlier this month, he spoke of his vision of a city “where we make it possible for working people to afford lives of joy, of art, of rest, of expression.” When’s the last time you heard a politician talk like this?

More here.


What are we ever really fighting for? The answer is love. Love in the movies, and on the streets, and in our heads—instead of the dead people we are seeing right now. Existence is a contagion of love. That’s why you have to fast-forward through a bunch of scenes in Reds, where men are giving speeches to other men in English and Russian with those faces of certainty—not hope, but certainty—that they are right and have it all figured out.


The story of “Human Behaviour” as we know it begins in 1993, when

Another bout of Gothic fever in the early twentieth century revolved less around a style than around a restless Gothic energy, an overwrought Gothic sensibility. In 1921 the German art historian Hermann Schmitz remarked that calling something Gothic had become the highest form of praise: a dancer on Berlin’s Kurfürstendamm might be complimented for the Gothic line of her movements, an Expressionist painting for its Gothic feeling. Schmitz bemoaned this misappropriation of the “most glorious legacy of the pious and pure spirit of our forefathers.” Seen as German by Germans and as French by the French, the Gothic was revered by those yearning for a lost age of faith and unity as well as by avant-gardes in search of the new.


Bhalla picked up the mantle from his predecessor Mayor Dawn Zimmer, launching a

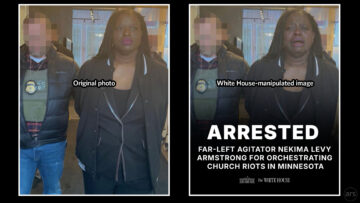

The Trump White House yesterday posted a manipulated photo of Nekima Levy Armstrong, a Minnesota civil rights attorney who was arrested after protesting in a church where a pastor is allegedly also an Immigration and Customs Enforcement (ICE) official.


On Jan. 13, Vermont legislator Troy Headrick (I)

AI Will Accelerate the Regulatory Pipeline

Kristalina Georgieva told delegates in Davos that the IMF’s own research suggested there would be a big transformation of demand for skills, as the technology becomes increasingly widespread.

Picture a fall afternoon in Austin, Texas. The city is experiencing a sudden rainstorm, common there in October. Along a wet and darkened city street drive two robotaxis. Each has passengers. Neither has a driver.

I must begin with a condition rather than a confession: my safety, anonymity and physical survival come first. In Iran, where words can still wound the body, this text is written cautiously, stripped of names and coordinates – anything that could invite harm. What follows is not testimony in the juridical sense, nor reportage. It is a personal record: fragile, partial, and deliberately inward. This is not about who I am in an administrative sense, but about where I stand.
