by Scott F. Aikin and Robert B. Talisse

Before the COVID pandemic, travel to academic conferences and colloquia was a large part of the job of being a professor at a research-focused university. The last few months have given us the opportunity to reflect on the hurly-burly of academic travel. We’ve keenly missed many things about those in-person events. Yet there are things we don’t miss very much at all. While academic conferences are still paused, we wanted to make a note of what’s worth our time and what isn’t, and then make some resolutions about what we can do better.

The bloom of online conferences since last spring provides a key point of comparison. The online conference has many of the same problems that beset the in-person conference: the schedules are overfull with interesting papers at conflicting times, presenters go over their allotted times and thereby leave no time for discussion, and the Q&A sessions tend to go off the rails with people asking questions that have more to do with their own views than with the presentation. But we were still pleased that the move online allowed younger scholars the opportunity to shine and get uptake with their work. And we were still able to hear a few presentations that provided some real insight. In these respects, online conferences are much like their in-person counterparts.

But there are differences. A unique feature of in-person conferences lies in the unplanned sociality that they make possible. The in-person setting allows for the possibility of passing some luminary in the hall between sessions, or meeting someone whose work you just read. In fact, it’s a piece of unacknowledged common wisdom that the true value of in-person conferences lies in unstructured time when one is not attending sessions.

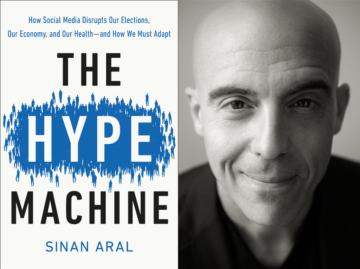

Given where we find ourselves in this late November of 2020, it is hard to think of a book more relevant or timely than The Hype Machine by Sinan Aral. The author is the David Austin Professor of Management and Professor of Information Technology and Marketing at the Massachusetts Institute of Technology. As one of the world’s foremost experts on social media and its effects, Prof. Aral is the perfect person to look at how this phenomenon has changed the world and the human experience. This is what he sets out to do in his new book, The Hype Machine, published under the Currency Imprint of Random House this September, and with considerable success.

Dylan Kwait. Surfers by Plum Island, October 2020.

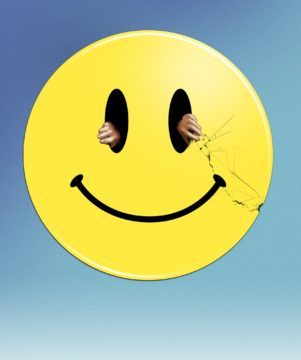

Not long ago there was an article circulating on Facebook about ‘Hating the English’, originally published in a large circulation newspaper. The Irish author says something to the effect that once she thought it was just a few bad ones etc., but now she hates the lot of them. It’s been stimulated, I think, by the repulsive English nationalism that has been raising its head since Brexit, plus the usual ignorance about Ireland, Irish history and Irish interests on the part of your typical ‘Brit’. It’s not a very good piece of writing, and it has a rather slight idea in it. I’d ignore it but for the ‘likes’ and positive comments it’s received, particularly from ‘leftists’. It’s an example of what we could call ‘bloc thinking’ – the emotionally satisfying but futile consignment of entire masses of people into categories of nice and nasty.

According to Donald Trump, in

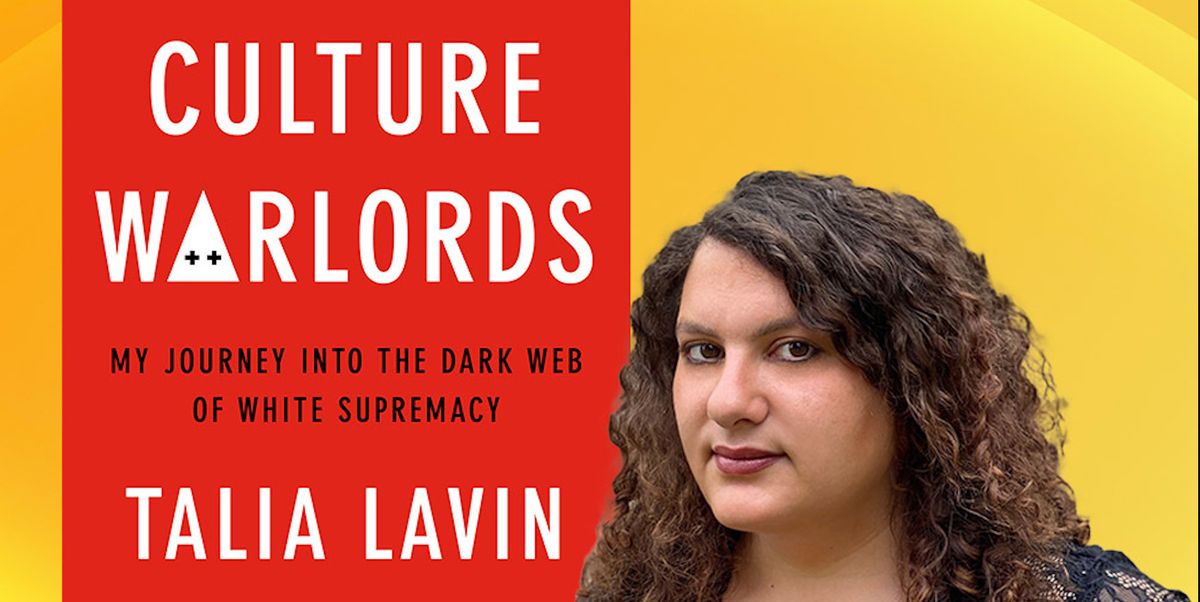

Recent protests in the US by Trump supporters since the election of Joe Biden highlight just how political ideologies have the potential to tear seemingly ‘stable’ societies apart. A political divide, however, cannot always be seen as a clear-cut contradiction between the right and the left, as Trump supporters, for example, might assert; Biden, and Democrats more broadly, could hardly be seen to represent the left. Likewise, the right has its shades of commitment to conservatism. However, Trump’s 70 million supporters represent a congealing of far-right politics in America identifiable by the policies articulated by Trump that they endorse: anti-immigration, racism, a resurgent nationalism. While there is little doubt that such policies have been magical music to the ears of many right wingers, for others Trump and the Republican Party do not go far enough, and it is these extreme right-wing groups that are the subject of Talia Lavin’s book Culture Warlords: My Journey into the Dark Web of White Supremacy.

When I was seventeen years old, I took my first college science course, a summer class in astronomy for non-majors. The professor narrated his wild claims in an amused deadpan, calmly showing us how to reconstruct the life cycle of stars, and how to estimate the age of the universe. This course was at the University of Iowa, and I imagine that the professor was accustomed to intermittent resistance from students like me, whose rural, religious upbringing led them—led me—to challenge his claims. Yet I often found myself at a loss. The professor used a soft sell, and his claims seemed somewhere beyond the realm of mere politics or belief. Sure, I could spot a few gaps in his vision (he batted away my psychoanalytic interpretation of the Big Bang by saying he had never heard of Freud), but I envied him. I wished that my own positions were so easy to defend.

Cosmology is a young science. Maybe the youngest. Some people say it started in the 1920’s when these little glowing clouds visible at certain points in the sky were found, by better and better telescopes, to be composed of billions and billions of stars, just like our own galaxy – the Milky Way – and it was then discovered that no matter what direction you looked they were all rushing away from us. More than one cosmologist has wondered if these galaxies know something that you and I don’t.

ONE HUNDRED YEARS AGO, when most American Jews were immigrants from Eastern Europe, nearly every Jew in the United States spoke Yiddish, but no one gave it any respect. Today, by contrast, everyone is full of affection for Yiddish, even though almost no one speaks it. Though one hears from every synagogue pulpit and reads in most university Jewish Studies mission statements that Hebrew is the eternal and unifying language of the Jewish experience, Yiddish maintains an emotional claim on the descendants of Eastern European Jews, as well as leaving an indelible imprint on the popular culture created by, for, and among these immigrants and their offspring. Is this valorization of Yiddish commensurate with knowledge and appreciation of — or respect for — the language and the culture it created beyond the lexicon of sentimental melodies, off-color jokes, and redefined adjectives? One could gesture to the 2020 Seth Rogen film An American Pickle without having to answer the question further. Emotional relationships can often lead in nonrational directions, seldom directed by facts.

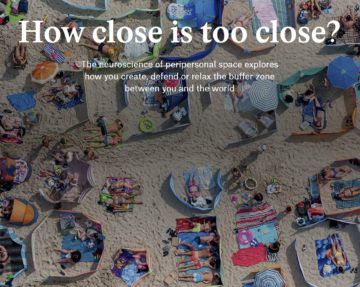

Heini Hediger, a noted 20th-century Swiss biologist and zoo director, knew that animals ran away when they felt unsafe. But when he set about designing and building zoos himself, he realised he needed a more precise understanding of how animals behaved when put in proximity to one another. Hediger decided to investigate the flight response systematically, something that no one had done before.

The beating heart of literature is writers’ engagement with sadness and the conflicts of their time. Many of these conflicts are centred on wealth and access to natural resources: land, water, mineral, forest, stone, sand, clean air. Big money, often with the aid of big media, attempts to shape public opinion about who controls the world, who deserves what, how resources ought to be shared. In a similar vein, traditional hegemonies in India – patriarchy and the caste system – try to control the stories we tell about each other.

THERE’S A WEALTH OF

The Covid-19 pandemic has made the world feel lonelier than ever as people have been shut away in their homes, aching to gather with their loved ones again. This instinct to evade loneliness is deeply engrained in our brains, and a new study published in the journal

More than 40 years ago, three psychologists published
