Brian Leiter in the Times Literary Supplement:

The German philosopher Friedrich Nietzsche (1844–1900) pursued two main themes in his work, one now familiar, even commonplace in modernity, the other still under-appreciated, often ignored. The familiar Nietzsche is the “existentialist”, who diagnoses the most profound cultural fact about modernity: “the death of God”, or more exactly, the collapse of the possibility of reasonable belief in God. Belief in God – in transcendent meaning or purpose, dictated by a supernatural being – is now incredible, usurped by naturalistic explanations of the evolution of species, the behaviour of matter in motion, the unconscious causes of human behaviours and attitudes, indeed, by explanations of how such a bizarre belief arose in the first place. But without God or transcendent purpose, how can we withstand the terrible truths about our existence, namely, its inevitable suffering and disappointment, followed by death and the abyss of nothingness?

Nietzsche the “existentialist” exists in tandem with an “illiberal” Nietzsche, one who sees the collapse of theism and divine teleology as tied fundamentally to the untenability of the entire moral world view of post-Christian modernity.

More here.

In 1890, just over six thousand people lived in the damp lowlands of south

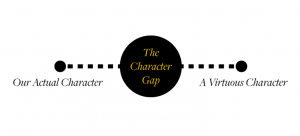

EARLY IN THE MORNING

Here is the predicament that most of us seem to be in. We are not virtuous people. We simply do not have characters that are good enough to qualify as honest, compassionate, wise, courageous, and the like. We are not vicious people either—dishonest, callous, foolish, cowardly, and so forth. Rather we have a mixed character with some good sides and some bad sides. This is the most plausible interpretation of what psychology tells us. It is also true to our lived experience in the world. Those are the facts as I see them. Now comes the value judgment—this is a real shame. It is very unfortunate that our characters are this way. It is a good thing—indeed, a very good thing—to be a good person. Excellence of character, or being virtuous, is what we should all strive for. Admittedly, the news is not all bad. It would be a lot worse if most of us were vicious people. Imagine what it would be like to live in a world full of mostly cruel, self-centered, dishonest, and hateful people. It would be hell on earth.

What strategies are there to try to develop a better character, and which of these strategies show substantial promise? In my book The Character Gap: How Good Are We?, I consider a number of different strategies. One I find quite interesting is what we might call “virtue labeling.” Suppose you come to believe, as I do, that most of the people we know do not have any of the virtues. So your friend, your boss, your neighbor … you need to change your opinion of all of them. Now here is the interesting idea—even with this new outlook firmly in mind, you should still go ahead and call them honest people next time you see them. You should still praise them for being compassionate. You should go out of your way to comment on their courage.

You might feel that you have the ability to make choices, decisions and plans – and the freedom to change your mind at any point if you so desire – but many psychologists and scientists would tell you that this is an illusion. The denial of

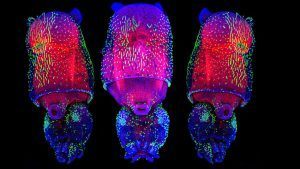

Billions of years ago, life crossed a threshold. Single cells started to band together, and a world of formless, unicellular life was on course to evolve into the riot of shapes and functions of multicellular life today, from ants to pear trees to people. It’s a transition as momentous as any in the history of life, and until recently we had no idea how it happened.

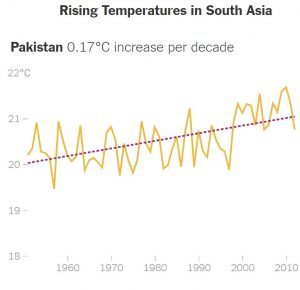

Climate change could sharply diminish living conditions for up to 800 million people in South Asia, a region that is already home to some of the world’s poorest and hungriest people, if nothing is done to reduce global greenhouse gas emissions,

What did I do to deserve a yelling at from the famously curmudgeonly and irascible Harlan Ellison? Well, from 2010 to 2013, I was the president of SFWA, the Science Fiction and Fantasy Writers of America, an organization to which Harlan belonged and which made him one of its Grand Masters in 2006. Harlan believed that as a Grand Master I was obliged to take his call whenever he felt like calling, which was usually late in the evening, as I was on Eastern time and he was on Pacific time. So some time between 11 p.m. and 1 a.m., the phone would ring, and “It’s Harlan” would rumble across the wires, and then for the next 30 or so minutes, Harlan Ellison would expound on whatever it was he had a wart on his fanny about, which was sometimes about SFWA-related business, and sometimes just life in general.

Moon Brow is a story of lust, love, and loss set during three periods of time: Iran’s revolution, the post-revolutionary years and the Eight Years War with Iraq, and the post-war era. An Eight Years War veteran, Amir Yamini, who once drowned himself in sex and alcohol, is discovered in a hospital for shell-shock victims by his mother and his sister Reyhaneh after languishing there for five years. Suffering from mental injuries caused by the war, Amir is haunted by a woman in his dreams whom he calls “Moon Brow” because he can’t see her face. Amir’s search for the truth of his past leads him to his old friend Kaveh, who might know what happened in Amir’s former life. The search for the woman he truly loved before going to war takes him back to where he lost his left arm, and his wedding ring, during the war. Amir’s relationship with his sister Reyhaneh is one of the best parts of the novel: a true companion, Reyhaneh helps Amir discover the truth of his life before the war. Moon Brow combines Amir’s journey into his past with the history of Iran, and it also conveys the trauma of war as it reveals the victims of Saddam Hussein’s genocidal ideology.

I’m asking myself about double standards a lot lately, in public life and also in science. I’m particularly concerned about double standards in science whereby women’s issues are viewed differently than men’s. We’ve lagged behind in important ways because there is a concern that if we have a biological explanation for women’s behavior, it will smash women up against the glass ceiling, whereas a biological explanation for men’s behavior doesn’t do such a thing. So, we’ve been freer in biomedical science to explore questions about the biological foundations of men’s behavior and less free to explore those questions about women’s behavior. That’s a problem that manifests itself in the gap between what we understand about men and what we understand about women.

In the 1970s, Shulamith Firestone

In his 1946 classic essay ‘Politics and the English language’, George Orwell argued that “if thought corrupts language, language can also corrupt thought”. Can the same be said for science — that the misuse and misapplication of language could corrupt research? Two neuroscientists believe that it can. In an intriguing paper published in the Journal of Neurogenetics, the duo claims that muddled phrasing in biology leads to muddled thought and, worse, flawed conclusions.

THIRTY YEARS AGO, while the Midwest withered in massive drought and East Coast temperatures exceeded 100 degrees Fahrenheit, I testified to the Senate as a senior NASA scientist about climate change. I said that ongoing global warming was outside the range of natural variability and it could be attributed, with high confidence, to human activity — mainly from the spewing of carbon dioxide and other heat-trapping gases into the atmosphere. “It’s time to stop waffling so much and say that the evidence is pretty strong that the greenhouse effect is here,” I said.