by Thomas Fernandes

In Part I, we explored how bees navigate without depth perception, using optic flow to fly straight through tunnels, land smoothly, and estimate the distance traveled. The visual system we examined works beautifully, providing simple navigation tools for complex tasks and leaving brain power for other activities such as identifying patterns, extracting nectar, remembering profitable routes, and returning home.

But what happens when that same motion-based perceptual interface must track and intercept a moving, evasive target? What are the rules of the hunt in insects? If any species can help us with this question, it is likely the dragonfly, with a hunting success rate close to 95%.

In a chase, a mammalian predator would run an interception course, using depth perception to estimate the closing distance rather than following in the exact footsteps of its prey. But insects cannot perceive depth, and they face a major optical challenge: motion parallax. When an observer moves, it becomes difficult to distinguish the target’s actual movement from the apparent motion caused by the observer’s own displacement.

Most flying insects, such as flies pursuing other flies, use what’s called parallel navigation. The strategy is simple: you align with your target and match any change in its direction. If the target veers left, you veer left. This is reactive pursuit, a continuous adjustment in response to the prey’s movement.

It solves the motion parallax problem efficiently: the hunter only needs to keep the image motion of its prey at zero at all times, which ultimately lets it zero in on the target. Notably, these chasing reflexes are driven by specialized “chasing neurons” that respond selectively to small, rapidly moving targets and are found only in chasing insects.
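To make the rule concrete, here is a minimal 2D sketch of parallel navigation under simplifying assumptions of our own: a point pursuer flying at constant speed, a world-fixed reference frame, and a made-up prey path. The function and variable names are hypothetical. At each step the pursuer copies the prey’s velocity component perpendicular to the line of sight, so the prey’s bearing never changes, and spends whatever speed remains on closing the range.

```python
import math

def parallel_navigation(pursuer, target, target_vel, speed,
                        dt=0.01, steps=2000, capture_radius=0.05):
    """Toy 2D parallel-navigation pursuit (illustrative model, not from the source).

    Returns (capture_time, bearings): capture_time is None if the target is
    never reached; bearings lists the line-of-sight angle (radians) at each step.
    """
    px, py = pursuer
    tx, ty = target
    bearings = []
    for step in range(steps):
        dx, dy = tx - px, ty - py
        dist = math.hypot(dx, dy)
        if dist < capture_radius:
            return step * dt, bearings
        ux, uy = dx / dist, dy / dist              # unit line-of-sight vector
        bearings.append(math.atan2(uy, ux))
        vtx, vty = target_vel(step * dt)
        # Split the target's velocity into along-sight and sideways parts.
        along = vtx * ux + vty * uy
        side_x, side_y = vtx - along * ux, vty - along * uy
        side_sq = side_x ** 2 + side_y ** 2
        if side_sq >= speed ** 2:
            return None, bearings                  # too slow to hold the bearing
        # Copy the sideways motion exactly; use the remaining speed to close in.
        closing = math.sqrt(speed ** 2 - side_sq)
        px += (side_x + closing * ux) * dt
        py += (side_y + closing * uy) * dt
        tx += vtx * dt
        ty += vty * dt
    return None, bearings

def veering_target(t):
    """Hypothetical prey: flies straight 'up', then veers left after 1 s."""
    return (0.0, 1.0) if t < 1.0 else (-0.7071, 0.7071)

capture_time, bearings = parallel_navigation((0.0, 0.0), (5.0, 2.0),
                                             veering_target, speed=2.0)
print(f"captured after {capture_time:.2f} s")
print(f"bearing spread: {max(bearings) - min(bearings):.2e} rad")
```

Because the sideways motion is copied at every step, the bearing stays fixed even after the hypothetical prey veers at t = 1 s; that constant bearing is the zero image motion the hunter monitors.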

Dragonflies do something fundamentally different, as tracking studies have revealed. In reactive pursuit, you’d expect a direct correlation between the prey’s steering and the predator’s response. But in dragonflies, 75% of steering events occur without any corresponding prey maneuver. Meanwhile, 70% of prey maneuvers are not followed by immediate corrective action on the dragonfly’s part. Hence, the dragonfly must be steering based on prediction, not reaction.

This requires an internal model not only of where the prey is going but also of how to distinguish the dragonfly’s own motion from the prey’s. How do insects with a brain the size of a grain of rice achieve this?

To start, dragonflies are well equipped. Their compound eyes have about 30 000 ommatidia, roughly five times as many as those of bees and flies. This gives them a visual acuity finer than 1° in a densely packed region of the eye called the fovea. While such acuity is record-breaking for an animal this size, it still falls short of human vision.

But when chasing fast-flying objects, a fine angle of detection is only as useful as your reaction time. To detect motion, humans need to compare successive images over about 50 ms, which is why films at 24 fps (each frame held for about 42 ms) appear continuous. Compound eyes face no such limitation for motion detection, and dragonflies can detect motion at up to 200 fps. In tracking studies, their average response time from visual input to corrective course was 47 ms. That is faster than the time our visual system needs merely to register that a change has happened, let alone the 120 ms it takes to process it.
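The timing comparison above is simple arithmetic; the snippet below just converts the quoted frame rates into per-frame durations. Treating the dragonfly’s 200 fps motion-detection limit as a frame interval is our simplification for illustration, and the variable names are hypothetical.

```python
def frame_interval_ms(fps):
    """Duration of a single frame, in milliseconds, at the given frame rate."""
    return 1000.0 / fps

film = frame_interval_ms(24)         # cinema frame rate quoted in the text
dragonfly = frame_interval_ms(200)   # dragonfly motion-detection limit, treated as a frame rate
human_integration_ms = 50            # window humans need to compare successive images
response_ms = 47                     # dragonfly visual-to-steering response time

print(f"film frame:      {film:.1f} ms")        # 41.7 ms
print(f"dragonfly frame: {dragonfly:.1f} ms")   # 5.0 ms
print(f"a {response_ms} ms response spans {response_ms / dragonfly:.1f} dragonfly frames")
print(f"and is shorter than the {human_integration_ms} ms human comparison window: "
      f"{response_ms < human_integration_ms}")
```

In other words, the dragonfly completes a full sense-and-steer cycle in the time a film shows little more than one frame.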

Yet, tools do not a craftsman make. To understand the dragonfly’s success, we must look past its anatomy and toward its strategy once a prey is detected and pursuit is engaged.

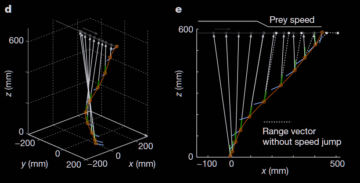

At takeoff, the dragonfly immediately aligns itself with the anticipated prey trajectory. It positions itself just below the prey, keeps its body tilted at a 30° angle, and holds the prey centered on its fovea. Positioned and aligned this way, the dragonfly reduces the multidimensional capture problem to closing the vertical gap with the prey using its superior speed. Figure 1 illustrates this in 3D and in a simplified 2D view.

Yet this strategy exposes the dragonfly to massive motion parallax. To use the figures calculated by Mischiati et al.: when the dragonfly rotates its body abruptly to position itself (at about 1 000°/s), the apparent shift in the prey’s position caused by the dragonfly’s own motion can be ten times larger than the shift caused by an abrupt turn of the prey.

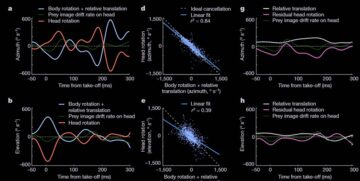

To counter this, dragonflies have evolved both an anatomical solution and a neuronal one. Anatomically, they can rotate their heads independently of their bodies, a solution they share with other predators such as hawks (check this hawk head stabilization video). The dragonfly’s nervous system, in turn, predicts how body movements will shift the visual image and issues corrective head commands before the image actually moves.

So as the dragonfly’s body moves, its head is already counter-rotating to stabilize the prey’s image. What’s extraordinary is the timing: head movements occur with an average lag of just 5 milliseconds after body disturbances. That is at the very limit of muscle contraction speed in insects, far too fast to be a response to what the eyes see, and therefore clearly predictive.
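A back-of-the-envelope model shows why such a short lag matters. Assume, purely for illustration, that the body turns at a constant 1 000°/s and that the head cancels each increment of rotation only after a fixed delay; the prey’s image then slips by whatever rotation accumulates during that delay. The 47 ms case stands in for a head that could only react to visual feedback, borrowing the response time quoted earlier; it is an assumption, not a measured head latency, and the function name is hypothetical.

```python
def max_image_slip_deg(rate_deg_s, duration_ms, lag_ms, dt_ms=0.5):
    """Largest prey-image slip (degrees) in a toy model where the body turns at a
    constant rate and the head cancels each bit of rotation only `lag_ms` later."""
    worst = 0.0
    steps = int(duration_ms / dt_ms)
    for i in range(steps + 1):
        t = i * dt_ms
        body = rate_deg_s * t / 1000.0                          # body angle so far
        head = -rate_deg_s * max(t - lag_ms, 0.0) / 1000.0      # delayed counter-rotation
        worst = max(worst, abs(body + head))                    # residual image shift
    return worst

RATE = 1000.0   # deg/s, the abrupt body rotation quoted above
visual_only = max_image_slip_deg(RATE, duration_ms=100, lag_ms=47)   # assumed feedback-only head
predictive = max_image_slip_deg(RATE, duration_ms=100, lag_ms=5)     # measured 5 ms average lag

print(f"feedback-only head: {visual_only:.0f} deg of slip")   # 47 deg
print(f"5 ms lag:           {predictive:.0f} deg of slip")    # 5 deg
```

In this crude model the slip is simply rate × delay, so cutting the delay from 47 ms to 5 ms shrinks the worst-case image shift tenfold.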

But the head movements do more than cancel the large shifts caused by the dragonfly’s own movements; they also continuously track the prey. This tracking, too, happens within milliseconds and must therefore be predictive, extrapolating the prey’s trajectory from its initial speed.

In other words, the dragonfly’s eyes do not follow the target; they are preprogrammed to stay aligned on it even amid massive disturbances from body rotation. Figure 2 shows how these head counter-movements keep the prey’s image stable during such rapid movement.

In short, to solve the relative motion problem, parallel navigation hunters hold their prey as a static target throughout the pursuit, whereas the dragonfly holds the prey’s future position as a static target. It does so through constant prediction, combining internal knowledge of how its own movements affect the image with the prey’s predicted path.

In the authors’ words: “In this view, if the dragonfly’s prediction of prey position were perfect, the prey image would be stabilized to a point for the entire flight and vision would be dispensable, at least for the purposes of interception”.

The head stays on target only to detect variations in the prey’s trajectory. When the change is small, like the change of speed in Figure 1, eye tracking corrects reactively, but the flight path needs only a minor adjustment to reach the new collision course. This is why 70% of prey maneuvers do not lead to an immediate large correction by the chasing dragonfly. Only when the prey makes a sudden, unpredictable maneuver does retinal drift occur, triggering a reactive flight correction within about 40 milliseconds.
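The predict-then-correct logic can be sketched with a toy tracker. Everything here is an assumption for illustration: the prey’s bearing is sampled every 5 ms, the internal model is plain constant-velocity extrapolation, and the prey’s turning rates, the 0.5° drift threshold, and the function name are invented. The head points where the prey should be; only the leftover retinal drift is used, and only large drift is flagged as a flight correction.

```python
def predictive_track(prey_angles, dt, drift_threshold_deg):
    """Toy predictive tracker (assumption: constant-velocity extrapolation).

    Returns (drifts, corrections): the retinal drift at each sample and the
    sample indices where drift exceeds the threshold.
    """
    est_angle = prey_angles[1]
    est_rate = (prey_angles[1] - prey_angles[0]) / dt
    drifts, corrections = [], []
    for i in range(2, len(prey_angles)):
        predicted = est_angle + est_rate * dt          # where the head already points
        drift = prey_angles[i] - predicted             # residual retinal drift
        drifts.append(drift)
        if abs(drift) > drift_threshold_deg:
            corrections.append(i)                      # sudden maneuver: steer reactively
        est_rate = (prey_angles[i] - est_angle) / dt   # update the internal model
        est_angle = prey_angles[i]
    return drifts, corrections

dt = 0.005                                             # 5 ms samples
rates = [20.0] * 20 + [22.0] * 20 + [-200.0] * 20      # hypothetical prey bearing rates, deg/s
angles = [0.0]
for r in rates:
    angles.append(angles[-1] + r * dt)

drifts, corrections = predictive_track(angles, dt, drift_threshold_deg=0.5)
print(corrections)   # [41]
```

The small speed change at sample 21 leaves a drift of about 0.01°, absorbed by updating the estimate, while the abrupt reversal at sample 41 leaves about 1.1° and is the only event flagged, mirroring the split between minor adjustments and reactive corrections.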

Accomplishing all this requires devoting 80% of a dragonfly’s brain neurons to vision, an investment even larger than its abundance of ommatidia, and one that only a specialized hunter could sustain.

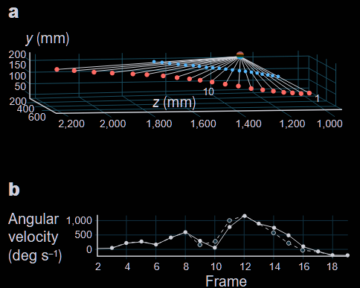

This neural commitment enables further feats. Dragonflies and hoverflies are among the few animals known to perform motion camouflage. Motion camouflage exploits the motion-based vision described above: a pursuer that keeps itself on the line between its target and a fixed point appears, to that target, as a stationary object at that point, so the target cannot tell it is moving. This seems impossibly hard to achieve, and yet it is what was observed in 6 out of 15 flight interactions between dragonflies. Here we can see a classic example in which the blue dragonfly appears to the red dragonfly as a static, distant point. Notice in (b) how close to perfect the illusion is: the mismatch stays below the detection level.
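The geometry of this trick is easy to state and check. In the sketch below (positions and function names are hypothetical), the shadower always sits somewhere on the line from a fixed reference point to its target, so from the target’s viewpoint it lies in exactly the same direction as that point, wherever the target flies and however the shadower closes in. The model is 2D and ignores looming, the growth in apparent size as the shadower approaches.

```python
import math

def bearing_deg(frm, to):
    """Direction from point `frm` to point `to`, in degrees."""
    return math.degrees(math.atan2(to[1] - frm[1], to[0] - frm[0]))

def shadow_positions(target_path, ref, fractions):
    """Place the shadower on the line from a fixed reference point to the target.

    fractions[i] (between 0 and 1) is how far along that line, starting at
    `ref`, the shadower sits at step i; a rising fraction means closing in.
    """
    rx, ry = ref
    return [(rx + f * (tx - rx), ry + f * (ty - ry))
            for (tx, ty), f in zip(target_path, fractions)]

target = [(0.5 * i, 3.0 + 0.2 * i) for i in range(10)]   # hypothetical target flight
ref = (0.0, 0.0)                                          # fixed point being mimicked
fractions = [0.2 + 0.06 * i for i in range(10)]           # shadower steadily closes in
shadower = shadow_positions(target, ref, fractions)

# From the target's viewpoint, the shadower sits exactly where the fixed point is.
mismatch = max(abs(bearing_deg(t, s) - bearing_deg(t, ref))
               for t, s in zip(target, shadower))
moved = math.dist(shadower[0], shadower[-1])
print(f"bearing mismatch: {mismatch:.1e} deg, shadower moved {moved:.2f} units")
```

The bearing mismatch is zero up to rounding error even though the shadower covers a substantial distance, which is why the target sees only a motionless point.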

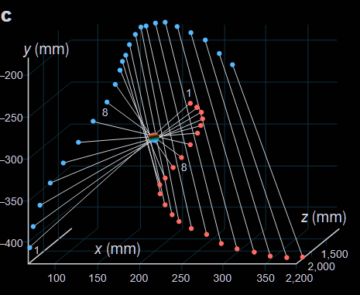

In a more complex display, the blue shadowing dragonfly moves in the opposite direction to the red one, creating for the first 8 frames the illusion that it is static at the midpoint, before switching to another camouflage strategy in which it gives the impression of being an infinitely distant object (and is therefore not represented on the graph).
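The second strategy can be sketched the same way. To mimic an infinitely distant object, the shadower keeps the line of sight from the target pointing in one fixed absolute direction, so its bearing never changes, just as a star’s would not, whereas a genuinely stationary landmark at finite distance sweeps across the target’s view. The positions, the 60° direction, and the function names are illustrative assumptions. Geometrically, this amounts to the same constant-bearing condition as the parallel navigation described earlier, seen from the prey’s side.

```python
import math

def bearing_deg(frm, to):
    """Direction from point `frm` to point `to`, in degrees."""
    return math.degrees(math.atan2(to[1] - frm[1], to[0] - frm[0]))

def infinity_camouflage(target_path, direction_deg, distances):
    """Shadower positions that keep a constant absolute bearing from the target."""
    ux = math.cos(math.radians(direction_deg))
    uy = math.sin(math.radians(direction_deg))
    return [(tx + d * ux, ty + d * uy) for (tx, ty), d in zip(target_path, distances)]

target = [(0.4 * i, 0.1 * i) for i in range(8)]     # hypothetical straight flight
distances = [3.0 - 0.3 * i for i in range(8)]       # shadower closes in
shadower = infinity_camouflage(target, 60.0, distances)
landmark = (1.0, 3.0)                               # a real, stationary object

shadow_bearings = [bearing_deg(t, s) for t, s in zip(target, shadower)]
landmark_bearings = [bearing_deg(t, landmark) for t in target]
print(f"shadower bearing spread: {max(shadow_bearings) - min(shadow_bearings):.1e} deg")
print(f"landmark bearing spread: {max(landmark_bearings) - min(landmark_bearings):.1f} deg")
```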

Exactly how dragonflies compute and execute these stealthy trajectories remains unknown, as does why they produce such displays. Perhaps they are playing, training their flight and visual anticipation skills.

So far, we’ve focused on daylight gatherers and daylight hunters, yet the majority of insect species are nocturnal. So how do insects maintain flight orientation when most visual landmarks disappear? How do they even tell which way is up?

It might seem obvious to us, because gravity gives us a sense of direction. During slow hovering, legs hanging below the body might indicate gravity’s direction, but tiny insects can’t reliably sense gravity during rapid flight maneuvers. Instead, almost all flying insects rely on what’s called the dorsal light response. Essentially, it tells them that as long as they keep their backs to the light, they will fly straight.

To put yourself in their shoes: you can sense gravity in a pool of calm water. But after a few spins and dives in agitated water, how would you know which way is up? You would look at where the light comes from.

In nature, for millions of years, the brightest point has always been the sky. Even at night, the moon and stars shine brightest. So the dorsal light response reliably helped insects maintain a stable flight attitude, until humans introduced artificial lights.

A streetlamp or porch light is often much brighter than the night sky. The insect’s dorsal light response, encountering this novel stimulus, does what it’s evolved to do. The insects orient their backs toward the light and end up flying in circles. They think they are maintaining a relatively constant trajectory, but in reality, they are maintaining a constant distance to an artificial light source.
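A deliberately crude top-down sketch shows how a fixed orientation rule turns into an orbit. We assume, as a simplification not taken from the source, that banking to keep its back toward a light on its side amounts to keeping the light directly abeam, perpendicular to the direction of flight; the speed, time step, starting position, and function name are arbitrary.

```python
import math

def fly_near_light(start, light, speed=1.0, dt=0.005, steps=2000):
    """Toy top-down flight: at every step the insect heads at right angles to the
    direction of the light, keeping it abeam on its left (our stand-in for
    banking to keep its back toward a light off to one side)."""
    x, y = start
    lx, ly = light
    distances, swept = [], 0.0
    prev = math.atan2(y - ly, x - lx)          # insect's angular position around the light
    for _ in range(steps):
        heading = math.atan2(ly - y, lx - x) - math.pi / 2
        x += speed * dt * math.cos(heading)
        y += speed * dt * math.sin(heading)
        angle = math.atan2(y - ly, x - lx)
        swept += (angle - prev + math.pi) % (2 * math.pi) - math.pi   # wrap to [-pi, pi)
        prev = angle
        distances.append(math.hypot(x - lx, y - ly))
    return distances, swept

distances, swept = fly_near_light(start=(1.0, 0.0), light=(0.0, 0.0))
print(f"distance to light: {min(distances):.3f} to {max(distances):.3f}")
print(f"laps around the light: {swept / (2 * math.pi):.2f}")
```

The insect never “decides” to circle: applying the same local rule at every step keeps its distance to the lamp nearly constant while it completes more than one and a half laps. The slight outward drift (about 2.5% here) comes from the fixed time step, not from the rule itself.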

As the study “Why flying insects gather at artificial light” has shown, this is what drives insects’ apparent attraction to light: not attraction, not warmth, but disorientation. Insects are effectively trapped in a perceptual loop.

The circling pattern described above occurs when insects encounter a light to one side. They then bank continuously in response, tracing circles when seen from above. Other, less stable patterns can emerge. If the insect passes over the light, trying to keep its back toward it will flip the insect upside down. With lights at ground level, this can send insects crashing into the ground.

During the day, the sun is usually the brightest light source, and insects can also use horizon lines and optic flow from landscape features. These provide redundant orientation information. Only at night, with fewer visual references, can a single bright point source dominate the insect’s dorsal light response from 85 m away, with no competing cues to override it. A few insect species appear unaffected, but so far there is no explanation for why.

What ties these examples together is that each represents a perceptual interface adapted to its expected environment. Bees use simple angular velocity rules because natural landscapes are relatively stable and flowers don’t flee. Dragonflies build predictive models because prey move unpredictably and interception requires anticipation. Moths orient to overhead brightness because the sky has always been overhead.

Each interface makes assumptions about the world. Usually, these assumptions hold; occasionally, they don’t. When the environment shifts in ways evolution never prepared for, even sophisticated perceptual systems can fail spectacularly or be exploited ruthlessly. One animal known for exploiting spiders’ perceptual systems is the araneophagic (spider-eating) jumping spider of the genus Portia, which we will explore in the next essay.

***

If insects live inside evolved perceptual interfaces, so do we. I explore that question for human aesthetics in Why Do We Care About Fashion?

Enjoying the content on 3QD? Help keep us going by donating now.