Joe Pinsker in The Atlantic:

Later this year, if all goes well, Americans will be awash in social interactions again. At offices and schools, on sidewalks and in coffee shops, we’ll be bumping into one another like it’s 2019. The resulting flood of conversations will be extremely welcome. But less front of mind, at this still socially stifled moment, are the awkwardness and discomfort that will return along with day-to-day interactions. The co-worker who yammers on, the chatty subway seatmate who keeps you from reading your book, the friend of a friend who bores you at parties—they are all very excited to see you again, and have lots to catch you up on.

Perhaps this period before social life fully resumes is an occasion to revisit what we want from conversations and, more to the point, how we end them. In this regard, people generally have a poor sense of timing. “Conversations almost never ended when both conversants wanted them to,” concluded the authors of a study published earlier this month that asked people about recent interactions with loved ones, friends, and strangers. About two-thirds of them said they wanted the conversation to end sooner; on average, that group wanted the conversation to be about 25 percent shorter, Adam Mastroianni, a psychology doctoral student at Harvard and a co-author of the study, told me.

More here.

James Meadway in OpenDemocracy:

Thomas Moynihan in Aeon:

Alexander Zevin in New Left Review:

Erica Eisen in Boston Review:

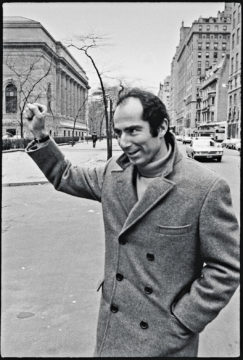

Aside from the flicker of fame that followed City of Quartz, Davis has managed to largely avoid the limelight for nearly four decades, despite receiving a MacArthur “Genius” Fellowship, a Lannan Literary Award, and many other honors along the way. For his devoted readers, part of his appeal is surely found in his writing style, which though forceful, self-assured, and playful, is also unapologetically precise, even scientific, making full use of a century-and-a-half’s worth of Marxist vocabulary. And part of it is his seemingly dour and idiosyncratic interests, which have led him to write books about the history of the car bomb, developmental patterns in contemporary slums, and the role of El Niño famines in nineteenth-century political economy.

“An illiterate, underbred book it seems to me: the book of a self-taught working man, & we all know how distressing they are, how egotistic, insistent, raw, striking & ultimately nauseating.” So goes Virginia Woolf’s well-known complaint about “

When Roth died at age eighty-five in 2018, Dwight Garner wrote in the New York Times that it was the end of a cultural era. Roth was “the last front-rank survivor of a generation of fecund and authoritative and, yes, white and male novelists.” Never mind that at least four other major American novelists born in the 1930s—DeLillo, McCarthy, Morrison, Pynchon—were still alive. Forget about pigeonholing as white and male an author who at the beginning of his career was invited to sit beside Ralph Ellison on panels about “minority writing”—because Jews were still at the margins. No matter that the modes that sustained Roth—autobiography with comic exaggeration, autobiographical metafiction, historical fiction of the recent past—are the modes that define the current moment. Roth was not an end point but the beginning of the present. There had been fluke golden boys before him, like Fitzgerald and Mailer, but Roth, twenty-six when he won the National Book Award for Goodbye, Columbus in 1960, reset the template for the prodigy author in the age of television, going at it with Mike Wallace in prime time. The morning before he spoke to Wallace he gave an interview to a young reporter for the New York Post, who asked him about a critic who’d called his book “an exhibition of Jewish self-hate.” A few weeks later the piece turned up in the mail Roth received from his clipping service while he was staying in Rome. He was quoted as saying the critic ought to “write a book about why he hates me. It might give insights into me and him, too.” “I decided then and there,” his biographer Blake Bailey quotes him saying at the time, “to give up a public career.”

Among the many puzzles that confronted American sailors during World War II, few were as vexing as the sound of phantom enemies. Especially in the war’s early days, submarine crews and sonar operators listening for Axis vessels were often baffled by what they heard. When the USS Salmon surfaced to search for the ship whose rumbling propellers its crew had detected off the Philippines coast on Christmas Eve 1941, the submarine found only an empty expanse of moonlit ocean. Elsewhere in the Pacific, the USS Tarpon was mystified by a repetitive clanging and the USS Permit by what crew members described as the sound of “hammering on steel.” In the Chesapeake Bay, the clangor—likened by one sailor to “pneumatic drills tearing up a concrete sidewalk”—was so loud it threatened to detonate defensive mines and sink friendly ships.

A couple of weeks ago, I attended an interdisciplinary seminar featuring work in progress on law and humanities. After the guest presenter finished reading his chapter draft, the floor opened for discussion: Legal scholars pushed for more terminological precision, historians suggested alternative timelines, political scientists offered comparative context that called some of the author’s conclusions into question. It wasn’t until the frank, fun, productive conversation had wrapped up that I put my finger on what had been missing. Where was the praise?

Alice and Bob, the stars of so many thought experiments, are cooking dinner when mishaps ensue. Alice accidentally drops a plate; the sound startles Bob, who burns himself on the stove and cries out. In another version of events, Bob burns himself and cries out, causing Alice to drop a plate.

And if you talk to people with a curious and open mind, you’ll pretty quickly find out that New York Times reporters are really smart. So are McKinsey consultants. So are the people working at successful hedge funds. So are Ivy League professors. Probably the smartest person I know was in a great grad program in the humanities, couldn’t quite get a tenure track job because of timing and the generally lousy job market in academia, and wound up with a job in finance at a firm that is famous for hiring really smart people with unorthodox backgrounds. Our society is great at identifying smart people and giving them important or lucrative jobs.

It’s 2050 and you’re due for your monthly physical exam. Times have changed, so you no longer have to endure an orifices check, a needle in your vein, and a week of waiting for your blood test results. Instead, the nurse welcomes you with, “The doctor will sniff you now,” and takes you into an airtight chamber wired up to a massive computer. As you rest, the volatile molecules you exhale or emit from your body and skin slowly drift into the complex artificial intelligence apparatus, colloquially known as Deep Nose. Behind the scene, Deep Nose’s massive electronic brain starts crunching through the molecules, comparing them to its enormous olfactory database. Once it’s got a noseful, the AI matches your odors to the medical conditions that cause them and generates a printout of your health. Your human doctor goes over the results with you and plans your treatment or adjusts your meds.

On February 22nd, in an office in White Plains, two lawyers handed over a hard drive to a Manhattan Assistant District Attorney, who, along with two investigators, had driven up from New York City in a heavy snowstorm. Although the exchange didn’t look momentous, it set in motion the next phase of one of the most significant legal showdowns in American history. Hours earlier, the Supreme Court had ordered

“I

Mann contended that Wagner’s art was neither monolithically grand nor sinister but deeply, violently ambivalent. He cited Nietzsche’s swing from filial devotion to Oedipal rebellion as a case in point. In his first major work, The Birth of Tragedy (1872), Nietzsche hailed his friend’s art in terms that fused Wagner’s own revolutionary rhetoric with Schopenhauer’s metaphysics of self-annihilation: it broke the “spell of individuation,” reopening the way to “the innermost heart of things.” But over the following decade Nietzsche shifted slowly from acolyte to skeptic, estranged by Wagner’s growing nationalism, the spectacle of Bayreuth, and his own changing intellectual needs. The final breach, he wrote, was precipitated by the self-betrayal of Parsifal, in which “Wagner, seemingly the all-conquering, actually a decaying, despairing decadent, suddenly sank down helpless and shattered before the Christian cross.” Later, in The Case of Wagner (1888), Nietzsche concluded that there had been nothing there to betray in the first place. Wagner had always been a disease, a toxin, and a neurosis, even before the encounter with Schopenhauer. “Only the philosopher of decadence gave to the artist of decadence—himself.”
