by Nickolas Calabrese

Recently the person whom I had been in a serious cohabiting relationship with for the past few years disappeared. Not in the Unsolved Mysteries kind of way, but in the “I just ghosted you because I can’t deal with breaking up with you in person” kind of way. She was spending a month working at an artisan’s residency in Seattle, when, one week in, she suddenly stopped responding to any correspondence. After a few weeks I finally spoke on the phone with R and she said that she simply had a change of heart and was now going to be living with her sister, shortly afterward arranging to have her belongings packed and moved by professionals. I didn’t see this coming – R never let on that anything was amiss, going so far as to mail me a letter during that first week at the residency declaring her love and desire for marriage, kids, the whole nine yards. We rarely had arguments; everything was good. She had simply changed her mind. I was surprised.

Now I’m sure this sort of thing happens all the time. And it’s reasonable that she wanted something else out of life, something that I could not offer. But allow me to ruminate on this surprise for a moment. I was surprised because I had assigned my highest subjective commitment of trust to her, beyond anybody else. After all, you place trust in someone that you deem trustworthy. Trustworthiness is not an inherent quality of any given person; it is an evaluation that you yourself make about the apparent reasons why you ought to trust another. But it is not without risk: whenever you choose to trust someone you take a gamble on whether or not your trust will be broken, which can result in being hurt emotionally or otherwise.

A rather ambitious

CHRISTOPHER TALBOT THOUGHT

It was the autumn of 1868, and for the samurai warriors of the Aizu clan in northern Japan, battle was on the horizon. Earlier in the year, the Satsuma samurai had staged a coup, overthrowing the Shogunate government and handing power to a new emperor, 15-year-old

IT’S PROVING DIFFICULT TO STOP THINKING

When working with people in other disciplines – whether surgeons, fellow engineers, nurses or cardiologists – it can sometimes seem like everyone is speaking a different language. But collaboration between disciplines is crucial for coming up with new ideas.

People too often forget that IQ tests haven’t been around that long. Indeed, such psychological measures are only about a century old. Early versions appeared in France with the work of Alfred Binet and Theodore Simon in 1905. However, these tests didn’t become associated with genius until the measure moved from the Sorbonne in Paris to Stanford University in Northern California. There Professor Lewis M. Terman had it translated from French into English, and then standardized on sufficient numbers of children, to create what became known as the Stanford-Binet Intelligence Scale. That happened in 1916. The original motive behind these tests was to get a diagnostic to select children at the lower ends of the intelligence scale who might need special education to keep up with the school curriculum. But then Terman got a brilliant idea: Why not study a large sample of children who score at the top end of the scale? Better yet, why not keep track of these children as they pass into adolescence and adulthood? Would these intellectually gifted children grow up to become genius adults?

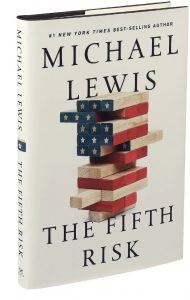

“Many of the problems our government grapples with aren’t particularly ideological,” Lewis writes, by way of moseying into what his book is about. He identifies these problems as the “enduring technical” variety, like stopping a virus or taking a census. Lewis is a supple and seductive storyteller, so you’ll be turning the pages as he recounts the (often surprising) experiences of amiable civil servants and enumerating risks one through four (an attack by North Korea, war with Iran, etc.) before you learn that the scary-sounding “fifth risk” of the title is — brace yourself — “project management.”

It will mean fewer jobs. That was the chorus from many on the right, from Tej Parikh of the Institute of Directors to the chancellor, Philip Hammond, in response to proposals from the shadow chancellor,

How do hands move in heaven? Ted Danson knows. Watch him in “The Good Place,” NBC’s circle-squaring philosophical sitcom about life, death, good, evil, redemption and frozen yogurt. As Danson speaks, his hands flutter and hover in front of him like a pair of trained birds. They poke and swirl, pinch and twist. They snap suddenly ahead to accent a word as if they’re plucking a feather from a passing breeze. Danson is tall and slim — he was a basketball star growing up — and his hands are expressively large. He can move them, when he needs to, with the long-fingered languor of Michelangelo’s God reaching out to touch Adam. On the show, Danson plays an “architect” of the afterlife named Michael, a sort of immortal Willy Wonka who dresses in bright suits and bow ties. He is always flying into spasms of delight over the fascinating novelties of human culture — paper clips, suspenders, karaoke, Skee-Ball — and in one scene he gets so celestially excited that he lunges into a squat, holds his arms out in front of him and gyrates his wrists like an electric mixer on full blast. “How do you pump your fist again?” he asks. “Is this it?”

Belief is back. Around the world, religion is once again politically centre stage. It is a development that seems to surprise and bewilder, indeed often to anger, the agnostic, prosperous west. Yet if we do not understand why religion can mobilise communities in this way, we have little chance of successfully managing the consequences.

In capturing the voices, travails, and eventual connection of two lonelyhearts, Guadalupe Nettel’s After the Winter captures the spirit of urban loneliness so vividly that it’s often painful to read. But, as with her story collection Natural Histories (2013) and novel The Body Where I Was Born (2011), Nettel casts a sardonic, cocked eye at all the sadness. She’s funny, wickedly so, just as much as she shows us these lonely souls from the perspectives of others.

If it sounds as if the movie’s depiction of authenticity, especially in the case of Gaga, is somehow blinkered, this isn’t the case. “Gaga: Five Foot Two,” in its focus on its subject’s usually concealed struggles, willfully disregarded the showiness inherent even in her most private actions. “A Star Is Born,” however, is able to accommodate exactly this doubleness. Cooper’s movie presents itself as the greatest love story ever told. It’s an emotional blockbuster, visually grand, and, within the logic of its world, meaningful gestures undertaken by larger-than-life characters—a single tear trailing down Ally’s face, Maines’s finger tracing the outline of her strong nose, Ally cupping Maines’s cheek—take on a duality that Gaga’s skills are exactly made for. She is both the dressed-down girl next door and the mythical superstar, and her ability to nimbly straddle these two poles is what makes her performance great. What came across in the documentary as an uncomfortable mix produces a satisfying combination in an outsized epos like this one, the two impulses tempering and complementing each other.

Sam Moyn in The Boston Review:
