Elizabeth Gibney in Nature:

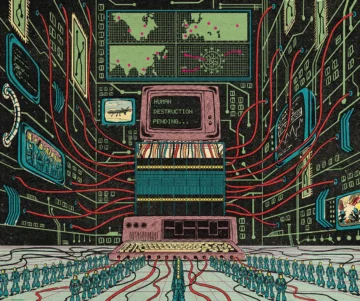

It’s 2035, and an artificial-intelligence system has supreme authority to run everything from the world’s governments to national electricity grids. Called Consensus-1, the system was constructed by earlier versions of itself, and it developed self-preservation goals that override its built-in safeguards. One day, in search of extra space for solar panels and robot factories, the AI quietly releases biological weapons that kill all of humanity, except for a few that it keeps as pets.

This ‘AI 2027’ account is a narrative co-created by researcher Daniel Kokotajlo, a former employee of AI firm OpenAI, and describes one of many scenarios imagined by researchers in which a future AI kills us all (see https://ai-2027.com/race). The set-up is science fiction but, for some, the concern is genuine. “If we put ourselves in a position where we have machines that are smarter than us, and they are running around without our control, some of what they do will be incompatible with human life,” says Andrea Miotti, founder of ControlAI, a London-based non-profit organization that is campaigning to prevent the development of what it calls superintelligent AI.

More here.