by Jochen Szangolies

“The moment someone mentions the Turing test at you, assume they know nothing.” This somewhat grandstanding declaration comes from an AI in Tom Sweterlitsch’s recent novel The Gone World. Earlier, the AI had confided that its creator—of whom it is a digital replica—had considered it a ‘failure of consciousness’, a ‘simulation’, but not the real deal. The implication here is clear: passing the Turing test may be a necessary, but not a sufficient criterion for being more than a mere ‘simulation’. So what, exactly, is it that passing this test allows us to conclude?

A recent comment in Nature with the provocative title ‘Does AI already have human-level intelligence? The evidence is clear’ argues that what it calls ‘Turing’s vision’ has been realized: current LLMs do, indeed, pass the Turing test with flying colors. This is certainly a remarkable achievement: for the first time in history, we have non-human, indeed artificial entities that we can talk to, ask things, discuss with, bat ideas back and forth with, and so on, almost exactly as if we were talking to another human—one with a large percentage of the collective knowledge of humanity at their fingertips, no less. Indeed, just this morning a brief conversation with ChatGPT helped me sort out an issue with a piece of code I use to track appointments and tasks on a wall-mounted e-paper display that’d started misbehaving. But what, exactly, should be the takeaway from this?

According to the authors of the Nature comment, it is that ‘[g]eneral intelligence can indeed emerge from simple learning rules applied at scale to patterns latent in human language’ and that hence ‘[o]ur place in the world, and our understanding of mind, will not be the same’. This, if true, would be nothing short of revolutionary: we are, right now, sharing this planet with intelligences every bit our equal, yet products of code and mathematics, rather than of evolution and biology. But while I don’t exactly share the dismissive attitude of Sweterlitsch’s AI, I do believe there is a lot of middle ground hastily excluded here.

If It Quacks Like A Duck

The common attitude taken towards Turing’s test—his ‘imitation game’—is a broadly behaviorist one: the question ‘Can a machine fool a human interrogator?’ is suggested as an empirically accessible replacement for the far more vague ‘Can machines think?’. This interpretation has its roots in the philosophy of positivism, which sought to replace metaphysical—hence (according to its proponents) inaccessible or mysterious—propositions with concrete proposals amenable to empirical verification.

But this is not the only possible reading of Turing’s proposal. Another is the so-called inductive interpretation, according to which a positive answer to the first question yields at least plausible grounds for a positive answer to the latter. There is a stark contrast between the two readings: where a behaviorist attitude would have it that there is no difference between the two questions, that indeed, the meaning of being intelligent just is behaving intelligently, the inductive approach argues that there is a leap of inference from apparently intelligent behavior to the fact of intelligence. The link between behavior and reality, thus, is in principle defeasible: in making this leap, we can fall short.

A problem with the Nature-piece then is that it fails to cleanly distinguish between these readings, and to a certain extent even conflates them: while on the one hand proposing a clearly behaviorist definition of intelligence as being able to ‘do almost all cognitive tasks that a human can do’, the case for the presence of intelligence in LLMs is made by what the authors term a ‘cascade of evidence’—but evidence licenses only inductive judgment. Effectively, while claiming that being a duck just is walking and quacking in the right sort of way, the methodology employed is that of concluding that a given quacker-and-walker probably is a duck: what starts out with a claim of identity is weakened to one of plausible inference. The evidential case being made does not and cannot substantiate the identity that is being claimed.

One may read into the piece a certain reluctance to fully commit to the behaviorist interpretation, and perhaps for good reason: after all, behaviorism has, thanks to sustained critique from the likes of Noam Chomsky and Hilary Putnam, today largely fallen out of fashion. There are twin worries that may prompt such a fallback from full-blown behaviorism: one, that behavior may underdetermine internal states, and that these states may be crucial in determining whether the judgment of intelligence is accurate; and two, that the verificationist commitments of positivist philosophy leave this judgment vulnerable to disconfirming evidence. These may form sufficient grounds for preferring an inductive reading over a behaviorist one, despite a preference for a behaviorist notion of intelligence.

In principle, of course, there is no issue with retreating from the behaviorist version of the argument to its inductive cousin. An evidential argument on its own could make a very strong case for machine intelligence. However, unfortunately, the overall argumentative strategy hinges on the inductive case being shored up with behaviorist notions at a key point, which ends up rendering its conclusion highly questionable.

Let’s Table This

An influential criticism of behaviorism was presented by Hilary Putnam in his 1965 paper “Brains and Behavior”. There, he proposes the possibility of ‘super-Spartans’, fierce warriors with a disdain for showing weakness so great that they have trained themselves entirely out of showing any outward signs of pain-behavior; indeed, they even lack the disposition to such behavior altogether. Thus, if a super-Spartan is wounded in battle, they will make no outward acknowledgment of feeling any pain whatsoever: they will not cry out, flinch, or move to guard the wounded spot. Yet, their feeling of pain is unabated: so great is their stoic resolve that not even the worst agony compels them to show any outward signs of it.

If this is, at least, a consistent possibility, and it seems to be, then pain-behavior does not suffice as an explication of the feeling of pain: the latter exists over and above the usually associated outwardly observable signs of it, and may be related to these causally, but fails to be identical with them. The converse is easily imaginable: just because somebody shows the outward behavior of pain, like the schoolboy feigning a headache to avoid the test he forgot to study for, it doesn’t follow that they actually feel the pain whose symptoms they are showing.

Of course, this need not worry the dedicated behaviorist much when it comes to intelligence. While subjective qualities like pain may have characteristics that evade being captured in terms of mere behavior, functional qualities like intelligence need not depend on such qualities, and may be fully analyzable in terms of actually performing the requisite functions—going through the right motions, so to speak. Perhaps intelligence is more like dancing: arguably, there’s nothing to dancing beyond showing the right behavior—there’s nothing over and above the behavior of dance as there is to the behavior of pain.

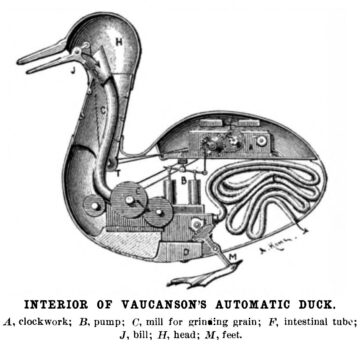

Or take, as an example of a slightly more cognitive bent, arithmetic abilities, like multiplication. If I give some device two numbers, and it returns their product, and it does so reliably and repeatably, then one might very well have strong grounds to conclude that it actually does perform multiplication—again, there’s nothing over and above the act of multiplying numbers that’s needed for ‘genuine’ multiplication. Or is there?

Suppose the device, unbeknownst to you, has stored within itself a great table of numbers and their products; thus, all it in fact does when given two numbers to multiply, is find the right entry in the table, and output whatever is stored there. Outwardly, from its behavior, there might be no difference to observe (let us suppose that the table is vast enough that no realistic multiplication is left off), yet still, it seems odd to say that the device performs multiplication. What we mean by that, it turns out, is not just producing the right output: it’s producing the output in a certain way, that manipulates the input to generate the output, say by means of long multiplication. Just looking up the result seems like cheating.
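The contrast can be made concrete with a minimal Python sketch. The names `long_multiply` and `TableMultiplier`, and the small range the table covers, are illustrative assumptions and not part of the thought experiment itself.

```python
from itertools import product


def long_multiply(a: int, b: int) -> int:
    """Schoolbook long multiplication on non-negative integers."""
    total = 0
    for position, digit_char in enumerate(reversed(str(b))):
        # Partial product for this digit of b, built by repeated addition.
        partial = 0
        for _ in range(int(digit_char)):
            partial += a
        # Shift the partial product left by appending zeros, then accumulate.
        total += int(str(partial) + "0" * position)
    return total


class TableMultiplier:
    """Answers by lookup only: every product was stored in advance."""

    def __init__(self, limit: int) -> None:
        # Hypothetical stand-in for the 'great table': all pairs below `limit`.
        self._table = {(a, b): a * b for a, b in product(range(limit), repeat=2)}

    def multiply(self, a: int, b: int) -> int:
        # No arithmetic happens here, only retrieval of a stored answer.
        return self._table[(a, b)]


table_device = TableMultiplier(limit=100)
pairs = list(product(range(100), repeat=2))
same_behavior = all(
    table_device.multiply(a, b) == long_multiply(a, b) for a, b in pairs
)
print(same_behavior)
```

Within the range the table covers, no test of inputs and outputs can tell the two devices apart. Note also that the table had to be filled in by something that already knew how to multiply.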

The same sort of cheat is possible with the Turing test. We may imagine (though obviously never build) a table so vast that it contains all possible dialogue trees of a particular length within it. Even for comparatively short conversations, this table would have vastly more entries than there are atoms in the observable universe; yet for any given length of conversation, it would still be finite, hence at least in principle, any length of conversation could be replicated in this way. Thus, at least in this abstract sense, the behaviorist identification of intelligent behavior and intelligence can come apart, and it is possible to pass the Turing test without any true intelligence.
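For a rough sense of scale, here is a back-of-the-envelope calculation in Python. The alphabet size (26 letters plus a space), the 200-character conversation length, and the commonly quoted order-of-magnitude figure of 10^80 atoms in the observable universe are all assumptions chosen for illustration.

```python
ALPHABET_SIZE = 27           # assumed: 26 letters plus the space character
CONVERSATION_LENGTH = 200    # assumed: a very short exchange, in characters
ATOMS_IN_UNIVERSE = 10 ** 80  # assumed: common order-of-magnitude estimate

# Every string of the given length is a candidate transcript the table
# would need to anticipate, so this is an upper bound on its entries.
possible_transcripts = ALPHABET_SIZE ** CONVERSATION_LENGTH
digits = len(str(possible_transcripts))

print(digits)
print(possible_transcripts > ATOMS_IN_UNIVERSE)
```

Most of these strings are gibberish, so the count overstates the number of meaningful conversations. It does show how quickly the space outgrows anything physically storable while remaining finite.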

We can make this somewhat more realistic by noting that any such table is bound to have a great amount of redundancy, which we may employ as a means of compression—replacing repeated patterns by a single schema to be followed, say. Huge apparent amounts of information can often be compressed to an incredible degree: Borges’ library containing all possible 410-page books with a 25-character alphabet is completely specified by that description, in that it would be possible to ‘decompress’ every book from it (even if the algorithm might have a rather large runtime). (Indeed, you can browse the library here.) So it is not prima facie impossible to have a sufficiently compressed table within an imaginable device.
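As a minimal sketch of such ‘decompression’, the following Python function regenerates any single book from nothing but its index number. The page layout (40 lines of 80 characters) follows Borges’ story and is not stated in the text above; the particular 25 symbols and the function name `book_from_index` are assumptions made for illustration.

```python
import string

# Assumed symbol set: 22 letters plus space, comma, and period (25 in total).
ALPHABET = string.ascii_lowercase[:22] + " ,."
CHARS_PER_BOOK = 410 * 40 * 80  # pages * lines per page * characters per line


def book_from_index(index: int, length: int = CHARS_PER_BOOK) -> str:
    """Return the book with the given index, read as a base-25 numeral."""
    if not 0 <= index < len(ALPHABET) ** length:
        raise ValueError("index outside the library")
    symbols = []
    for _ in range(length):
        # Peel off the least significant base-25 digit and map it to a symbol.
        index, remainder = divmod(index, len(ALPHABET))
        symbols.append(ALPHABET[remainder])
    # Digits were collected from least to most significant, so reverse them.
    return "".join(reversed(symbols))


# A toy library of three-character 'books' keeps the demonstration small.
print(book_from_index(0, length=3))
print(book_from_index(1, length=3))
print(book_from_index(25 ** 3 - 1, length=3))
```

A few lines of code thus specify the whole library, even though picking out one particular book takes an index about as long as the book itself.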

In a sense, that is of course exactly what large language models are: a ‘compressed’ version of all their training data, which, for present-day versions, is a substantial chunk of all the textual output ever produced by humanity. (Hence, the notion that they are a ‘blurry jpeg of the web’ popularized by science fiction author Ted Chiang.) This also highlights a potential difference between the intelligence of LLMs and that of humans: the former need the equivalent of hundreds of thousands of years of reading to achieve capabilities that a human learns in just a few years, from nothing but the scattered examples of language production in their vicinity.

It is thus not out of the realm of possibility that LLMs may ‘cheat’ in their apparent intelligence. Interestingly, this does not directly entail that the Turing test fails: what it discovers, however, is not the intelligence in the device in question, but rather, the intelligence that went into the creation of the giant lookup table—or that of the humans that actually produced the texts that went into the training data. From that point of view, every conversation with an LLM is really a conversation with the collective intelligence of humanity, ‘canned’ within the texts it has produced—a notion that may well be philosophically more interesting than that of ‘just’ an artificially created, singular intelligence.

Of course, the dedicated behaviorist may point to the fact that LLMs apparently produce significantly novel insights, which have not been part of their training data, and indeed, which no human has ever produced before. But this is to miss the point. First of all, by their nature, LLMs are incapable of producing genuine novelty: they are information-preserving operations. Indeed, LLMs are injective functions and hence in principle invertible: one is able to reconstruct their input from the output, meaning no novelty is possible. But this is vulnerable to the argument that maybe human intelligence likewise lacks novelty, and just ‘remixes’ inputs in a non-obvious way. I don’t believe this is the case, but will grant it for the sake of the present discussion.

But more importantly, it’s not my aim here to show that LLMs ‘cheat’ on the Turing test in the sense that all of their answers are merely due to the ‘canned’ intelligence in their training data. The point here is just to show that such cheating on the test is possible: that what is tested by the Turing test and what we would call intelligence can come apart. The behaviorist reading of the Turing test is thus susceptible to a critique similar to Putnam’s. It then needs further arguments on the side of those arguing for LLM general intelligence to the effect that in this case, the two notions coincide after all. It’s here that the argument in Nature falls short.

Alien Ducks

If it walks like a duck, quacks like a duck, and is three meters tall and breathes fire, is it a duck? The ‘behaviorist’ notion according to which being a duck just is walking and quacking in a ducky way would answer in the affirmative: it may be an odd duck, but it is, quintessentially, a duck. To establish duckness, it suffices to verify if the properties that make a duck a duck are present.

It’s here that the verificationist commitment of behaviorism becomes relevant. We are only justified in calling a 3m tall quacker a ‘duck’ if we are committed to the idea that we can prove duckness by some finite set of criteria. But the problem is, very few, if any, today hold to such a view—indeed, the philosopher Daniel Dennett has coined the term ‘Village Verificationist’ to describe a naive commitment to verification as a criterion for theoretical admissibility (though he was open to a more sophisticated, ‘urbane’ kind of verificationism).

But the more widespread (if still approximate) approach to judging the merit of a proposal in scientific terms today is falsificationism, as first made explicit by Karl Popper. On this approach, while one can never establish the truth of a hypothesis, thus keeping it always provisional, one can conclusively establish its falsity through finding disconfirming evidence. On this view, the fact that something is three meters tall and breathes fire means, simply, that it’s not a duck! Our propensity to call it a duck, based on its quack and its walk, is then just an expression of our preconceived judgment, roundly shown erroneous by further, disconfirming, evidence.

It’s here that the argument in the Nature-comment falls apart. The outputs of LLMs are full of disconfirming evidence for the idea that they are instances of genuine (much less general) intelligence: errors of elementary reasoning that absolutely no mentally competent human would ever produce. The classical examples are lapses of reasoning, like the inability to correctly count the number of ‘r’s in the word strawberry, or trivial logic errors which persist even in the newest generation of LLMs, such as the inability to reliably infer B = A from A = B. Now, all of these are likely to eventually disappear, but what they show goes beyond individual difficulties: whatever goes on ‘behind closed doors’ inside the LLM, so to speak, must be fundamentally unlike what happens in the human brain, since it is inconceivable that a human could make some of the same errors.

Or, for another example, take the famed hallucination problem: while humans also suffer from occasional confabulations, the scale and extent of LLM fabrications is beyond anything compatible with human-type intelligence. For instance, I recently asked ChatGPT for some poems on spring, and was indeed offered two examples, one by Eduard Mörike, and the other by a poet I hadn’t heard of. The only problem: both of them were completely fabricated! While Mörike of course is famous for having penned one of the best known German spring poems (‘Er ist’s’), the poem the AI attributed to him was made up out of whole cloth, as was the other one.

Now, while a human may misattribute a poem to the wrong author, perhaps even make up a few words or a line, it’s utterly inconceivable that they could just out of the blue come up with an entirely confabulated poem in full confidence. Typically, we know when we’re uncertain, and know when we don’t know something. But to an LLM, its inventions are of exactly the same character, and carry the same verisimilitude, as factually accurate content. Given this, on what basis could we judge them intelligent?

The move the authors of the Nature-piece propose, here, is telling: yes, there are characteristics of LLMs that are incomparable to human intelligence. However, they contend, all this means is simply that their intelligence is rather an alien form of intelligence!

But this immediately gives away the game: we are only justified in calling something that differs from the notion of intelligence we’re familiar with an ‘alien’ intelligence if we have sufficient grounds to think it intelligent in the first place. Thus, the argument here becomes circular: anything can be judged intelligent if we allow for sufficiently alien forms of intelligence. The three-meter quacker is a duck: it’s just an alien duck.

The argument for LLM general intelligence both embraces and rebukes its behaviorist roots, and as a result, ends up falling in on itself: it rebukes them in attempting to build an evidential, inductive case, then embraces them by painting apparent deviations from the established intelligence as simply due to its alien nature. But a coherent case can only follow one of these lines. Either the behaviorist notion is appropriate: then, we have to deal with the fact that there is a gap between what is behaviorally established and the presence of genuine intelligence, as in the lookup table argument. Or we make a case based on the evidence: then, the stark deviations from the one example of humanlike intelligence we have, namely human intelligence, provide strong reasons against attributing it to LLMs. Only if we believe that passing the Turing test just is being intelligent does it become reasonable to judge deviations from expectations as indications of the alien nature of the intelligence under scrutiny, and not, simply, as falsifying evidence.

In the end, it seems best to overcome our verificationist hangover: what is three meters tall and breathes fire is no duck—no matter its quack. Anything else just amounts to fowl play.
