by Malcolm Murray

By 2030, we will have countries of geniuses in data centers, but we won’t know what to do with them.

In my 10+ years as a Superforecaster, I have picked up many techniques and lessons regarding forecasting. Given the fractured state of the debate on Artificial General Intelligence (AGI) and AI progress, it seems useful to apply these Superforecaster lessons to the debate.

AI is a rich topic and of course contains many parallel debates, but one of the most heated (and most interesting) is regarding the expected continued speed of progress. That debate can probably be boiled down to the question of whether people are “feeling the AGI” or not. A phrase coined or at least popularized by Ilya Sutskever while at OpenAI, “feeling the AGI” has become a shorthand for a set of arguments saying that advanced AI will transform the world within a few years. This is the viewpoint of the people in the AI labs, for sure. See e.g. the statements from the leaders of the three leading AI labs – Sam Altman says “We are now confident we know how to build AGI”, Dario Amodei says “I think [powerful AI] could come as early as 2026”, and Demis Hassabis says “I think we’re probably three to five years away [from AGI]”. You can also find the frenzied AGI heads on X that greet every AI development with a collective genuflection of “AGI is near!”

In the other camp, you have the AGI naysayers, who for various reasons all believe that AI will not transform the world, or at least not in the near future or not without significant additional technological breakthroughs. Gary Marcus is a leading voice in this camp; other prominent members include Fei-Fei Li and Yann LeCun. The recent AI Paris Summit also showed that many politicians fall into this camp, from Macron seeing the summit as solely an investment opportunity to Vance’s speech downplaying any transformative effects AI might have.

We can try to make sense of this debate using some of the Superforecaster techniques and approaches. The first thing we need to do is to formulate the question more precisely. A lack of precision in the question being discussed is often one of the key reasons why people disagree, or why debates go unresolved. This is very clearly the case with “feeling the AGI”.

There are multiple definitions of AGI itself, as well as several closely related but distinct concepts. AGI used to be defined as an AI that can do everything a human can. However, as it has become clearer that advances in Large Language Models (LLMs) are moving ahead of advances in robotics, this is nowadays often redefined as an AI being able to do every intellectual task a human can do. Even so, it is still not very precise. Some people define it even more narrowly, as every cognitive digital task a human can do, with the AI functioning as a remote worker and AGI being reached when an AI can do every task that a human can do remotely with a laptop. Certain analyses use the example of COVID-19 as a proxy for AI's impact on the economy. Even then, we run into many definitional questions – is this an average human or is it an Einstein-level human? How long can the AI take to execute the task? What is a reasonable cost for the AI to execute the task?

As the above shows, most definitions of AGI focus on input rather than output. But there are also AGI definitions that focus on output. For example, OpenAI at one point defined AGI as when it makes $100 billion in profits. Similarly, the related concept of Transformative AI (TAI) is often defined as when AI is powerful enough that the economy changes significantly.

There are also the related concepts of ASI and PASTA. ASI is Artificial Super Intelligence, i.e. when the intelligence of AI is superior to a human in every way. This is also an input definition, and one we can put aside for now, as it is strictly defined as coming after AGI. Finally, PASTA, Holden Karnofsky’s useful (albeit unfortunately named) shorthand for Process for Automating Scientific and Technological Advancement, is when AI can autonomously make scientific progress. This is also an input definition and one that is narrower, so I will put that aside for now.

For the analysis below, I would like to distinguish between input and output definitions and explore how they might differ. Therefore, I will use AGI as the input definition and define it as an AI being able to do every cognitive digital task equivalently or better than the best human, in an equivalent or shorter time, and for an equivalent or cheaper cost. I will also use TAI as the output definition, and I will define it as a growth rate for the economy that is historically unprecedented, i.e. higher than any in recorded economic history. Because the diffusion of AI varies so much between countries, this can likely not be measured for the world as a whole but rather must be defined as one country achieving such a growth rate. Taking the U.S. as an example of a large economy, the U.S. economy has never grown faster than 18.9% in a year (in 1942, due to the war economy), so growth in that neighborhood would be an extremely potent signal of complete economic transformation.

We also need to define the timeframe clearly, another common mistake in forecasts (and a common get-out-of-jail-free card that many pundits excused themselves with in the studies of Philip Tetlock). 2030 seems to be the most common timeframe currently, probably because it is a round number and it strikes the balance between being sufficiently into the future to be highly uncertain, yet still meaningful for government and corporate planning. As an example, two prominent superforecasters used 2030 as their timeframe in a recent discussion. Even then, we need to be clear if by that we mean Jan 1 or Dec 31, 2030, which can make a huge difference. I will use Dec 31, since that represents the end of the decade.

So, the two distinct questions we end up with are:

- Will there exist by Dec 31, 2030, an AI that is able to do every cognitive digital task equivalently or better than the best human, in an equivalent or shorter time, and for an equivalent or cheaper cost?

- Will the U.S. by Dec 31, 2030, have seen a year-on-year full-year GDP growth rate of 19% or higher?

Overall, what I would argue is the most important Superforecasting technique is to look at the historical base rates and weigh these heavily in the analysis. At a high level, this means looking to the past for answers about the future and assuming that the past either repeats itself or at least rhymes, as Mark Twain might have said. Not assuming that the future will be radically different than the past is something that has served forecasters well for a long time. However, there are some key nuances that must be kept in mind and important choices to make when establishing the base rate.

The first thing to keep in mind is that we need to combine the inside and the outside view, as per Kahneman. This means looking both at the specifics of an event and at its general reference class. The classic example is assigning a probability to a couple divorcing. Yes, one should consider their current level of marital strife, but it is equally important to look at the average rate of divorces in society.
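One way to make this combination concrete is to average the two views in log-odds space, a common pooling approach among forecasters. The sketch below is only an illustration under stated assumptions: the function `blend_views`, the equal weighting, and the divorce numbers are all hypothetical and not taken from any study or from the analysis in this piece.

```python
import math


def logit(p: float) -> float:
    """Convert a probability to log-odds."""
    return math.log(p / (1.0 - p))


def inv_logit(x: float) -> float:
    """Convert log-odds back to a probability."""
    return 1.0 / (1.0 + math.exp(-x))


def blend_views(base_rate: float, inside_estimate: float,
                base_weight: float = 0.5) -> float:
    """Weighted average of outside and inside views in log-odds space.

    base_rate:       outside view (frequency in the reference class)
    inside_estimate: inside view (judgment from case-specific details)
    base_weight:     weight on the outside view, between 0 and 1
    """
    for p in (base_rate, inside_estimate):
        if not 0.0 < p < 1.0:
            raise ValueError("probabilities must be strictly between 0 and 1")
    if not 0.0 <= base_weight <= 1.0:
        raise ValueError("base_weight must be between 0 and 1")
    pooled = (base_weight * logit(base_rate)
              + (1.0 - base_weight) * logit(inside_estimate))
    return inv_logit(pooled)


# Hypothetical numbers for the divorce example (assumptions, not data):
# a 40% societal base rate, and a 10% estimate from knowing the couple.
forecast = blend_views(base_rate=0.40, inside_estimate=0.10, base_weight=0.5)
print(f"Blended probability: {forecast:.2f}")
```

The blended figure always lands between the two inputs, and the weight on the base rate is the judgment call: the less informative the case-specific evidence, the closer that weight should be to 1.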

For AI, this means two types of analysis. On one hand, AI is a technological advance among others. It can be argued that AI is at the level of a General Purpose Technology, as this paper does, which would make it akin to electricity or the steam engine, while others argue that it is perhaps a lower-level advance, more akin to social media.

But in both cases, we are analyzing it as part of its reference class and establishing a base rate based on that class. The other approach is to look at AI as completely unique. The argument for this is that it is the first time that we are creating intelligence, a hitherto uniquely human-dominated aspect of the world and the likely reason for human dominance on Earth. This could arguably lead to quite large adjustments to the base rate.

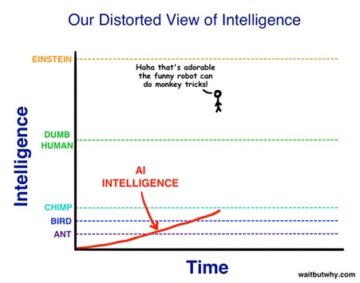

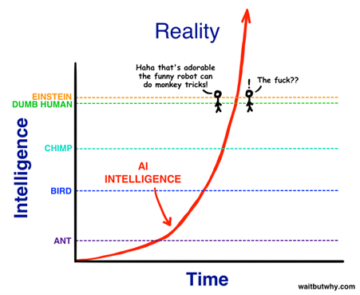

A second thing is to be very clear on the role of incentives for each actor. Any statements or analysis offered by the various players in the AI field will reflect their incentives. This is most easily spotted in the statements from the AI labs themselves, which have a valuation to maintain (and increase), or from politicians who say only what fits their short-term agenda. But even data is never fully objective and can always be analyzed in different ways. In the figures below, we see Tim Urban’s classic example showing how standing on an exponential curve makes things look very different depending on which way you are looking.

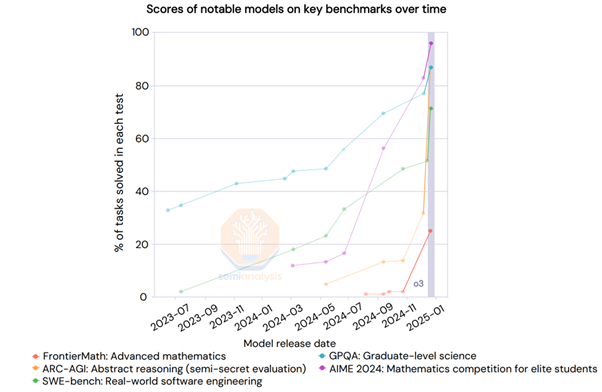

Third, we need to be very clear from which perspective we are establishing the base rate for AI progress. As with the input-output distinction above, we can draw very different conclusions if we look at AI progress from a technical point of view versus from a societal point of view. This can be seen as akin to De Bono’s 6 thinking hats. From a technical point of view, we have a plethora of benchmarks showing extremely rapid progress by AI models. Someone could easily look at a chart like the figure below, from SemiAnalysis, which shows o3’s performance on benchmarks, and draw the conclusion that AGI is near.

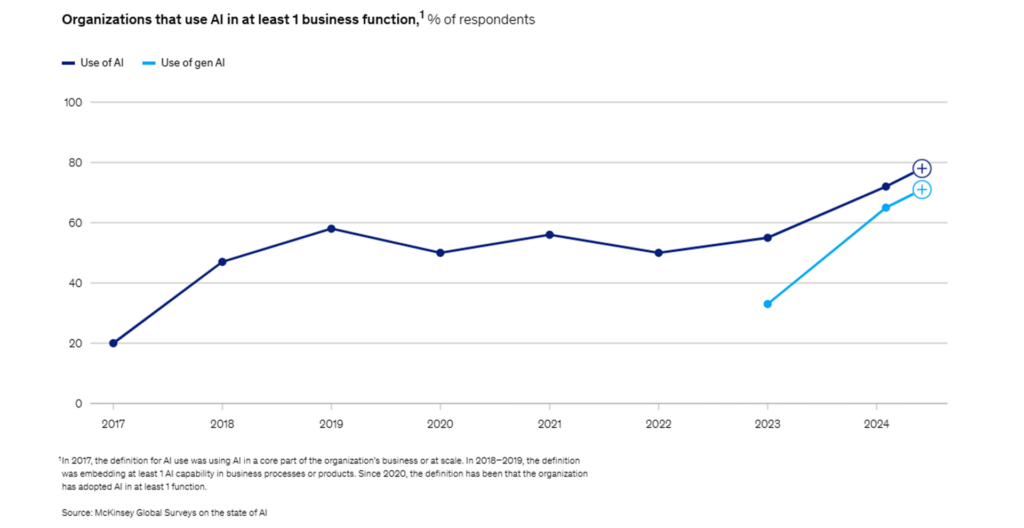

On the other hand, there is the societal point of view. Someone might look at a chart like the one below from McKinsey, showing enterprise adoption of AI, and draw the conclusion that AI progress is much slower.

These are of course very different things. The technical side measures AI progress “in a vacuum”, i.e. in a very clean and constrained environment, while the societal point of view considers the friction in the “real world” that slows down adoption. However, the concept of “AGI” conflates the two and it is important to separate them out. They should be looked at separately first, and only afterwards can we look at their combined effects. Societal adoption likely develops in parallel with technical progress but lags behind it due to friction, and could also remain perpetually lower due to constraints.

Putting all this together, it seems that the concept of AGI really is a conflation of two very separate questions:

- Will there exist by Dec 31, 2030, an AI that is able to do every cognitive digital task equivalently or better than the best human, in an equivalent or shorter time, and for an equivalent or cheaper cost (“AGI”)?

- Will the U.S. by Dec 31, 2030, have seen a year-on-year full-year GDP growth rate of 19% or higher (“TAI”)?

These two questions have separate base rates and will therefore likely resolve differently. My forecast, with 60% and 90% likelihood, respectively, is that the first one will resolve in the affirmative and the second one in the negative. That is, by 2030, it seems that we might have “AGI” but not “TAI”. Or we could say “we will have countries of geniuses in data centers (as Amodei put it), but we won’t know what to do with them.”
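To see what the two separate forecasts imply for the combined outcome (“AGI” but not “TAI”), the short sketch below computes the range of joint probabilities consistent with a 60% and a 90% forecast, using the standard Fréchet bounds. The helper `joint_bounds` is a hypothetical name, and the independence figure is shown purely for comparison; independence is an assumption of the sketch, not something claimed in this piece.

```python
def joint_bounds(p_a: float, p_b: float) -> tuple[float, float]:
    """Frechet bounds on P(A and B), given only P(A) and P(B)."""
    lower = max(0.0, p_a + p_b - 1.0)
    upper = min(p_a, p_b)
    return lower, upper


# The two forecasts stated above.
p_agi = 0.60      # first question resolves in the affirmative
p_no_tai = 0.90   # second question resolves in the negative

lower, upper = joint_bounds(p_agi, p_no_tai)

# For comparison only: the joint probability IF the two questions were
# independent (an assumption made here, not by the forecast itself).
independent = p_agi * p_no_tai

print(f"P(AGI and no TAI) lies between {lower:.2f} and {upper:.2f}")
print(f"Under independence it would be {independent:.2f}")
```

Whatever the dependence between the two questions, the combined outcome cannot be less likely than the lower bound or more likely than the weaker of the two individual forecasts.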
