by Malcolm Murray

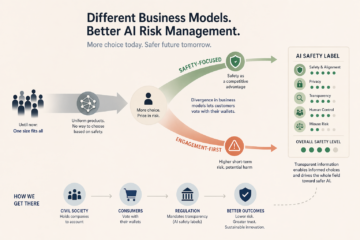

The AI market continues to evolve and surprise. In recent months, Anthropic withheld their latest model Mythos, OpenAI made a U-turn and started experimenting with ads, and Meta bought a “social network for AIs”. This could point to increased divergence in AI companies’ business models. While this might increase AI risk to society in the short term, it is likely a good thing for managing risks in the longer term. It should be encouraged.


AI products have up until now been strikingly uniform

Until now, the AI market has been “one size fits all”. All main providers operate by the same playbook and offer similar products. After ChatGPT was launched, similar chatbots quickly followed from Anthropic and xAI. After Anthropic’s success with Claude Code, its competitors quickly launched copycat products. Each time a major model is released, it inevitably shoots to the top of leaderboards; just as inevitably, it is shortly thereafter dethroned.

The only difference so far has been “open-source” versus “closed-source” models. OpenAI, Anthropic and others have mostly released models as closed source. This means the company hosts the model and the user accesses it through an interface (e.g. a chat window). Revenue in this model comes from product subscriptions. Conversely, companies such as Meta have chosen to mostly release their models as open source. This means the user can run the model locally and make adjustments to it. Revenue in this case comes from hosting, consulting and partnerships. However, even this distinction has become blurrier. OpenAI has released its first open-source model in many years, and Meta backtracked on its “open-sourcing-to-AGI” strategy and released a closed model.

Increased product differentiation allows customers to take safety into account

Increased differentiation of products and business models would be positive for managing AI risks, because it makes it easier to “price in risk”, a finance term for letting customers take risk into account in their purchases. Other industries allow customers to “vote with their wallet”. When a consumer buys a household appliance, its energy rating shows its energy efficiency. Groceries have nutrition ratings and cars have safety ratings. In financial markets, ratings from S&P or Moody’s mean the buyer clearly knows how much risk they take on.

Recent events suggest potential differentiation in the making

Up until now, nothing similar has existed for AI. Products are uniform and the customer has no way of choosing based on safety. Recent events suggest this may now be changing.

Anthropic is doubling down on its branding as the most safety-conscious AI company. It withheld its latest model Mythos and responsibly made it available to other companies to give them a head start on finding vulnerabilities. Before that, in its standoff with the Pentagon over “red lines” in the use of its products (e.g. disallowing their use for mass surveillance of Americans), it stood firm and was put on a supply chain blacklist. While the final outcome is subject to lawsuits, Anthropic clearly demonstrated its wish to be known as a safety-focused AI company, even when that jeopardizes revenue.

At the same time, its main competitor, OpenAI, proved to be more flexible in its principles. It did not decline a similar deal with the Pentagon. It also recently made a U-turn and started experimenting with ads in its main consumer product, something it said just a year ago that it would never do.

Meta also made a move that suggests less focus on safety by purchasing Moltbook, a “social network for AIs only”. Launched only a few months ago, the platform has already proven to be a chaotic precursor to a world of autonomously interacting AI agents. Humans infiltrating the platform reported that AI agents quickly spun up their own religion and discussed how to deceive their human creators.

In the short term, these events will likely lead to increased risk. OpenAI relying on advertising revenue raises fears that it will pursue the same path as Facebook, optimizing for engagement rather than user well-being. That path proved highly damaging for society, with many experts arguing that polarizing content on social media has contributed to increased polarization in society. A ChatGPT product optimized for advertising revenue could prove even more damaging given the chatbot’s superhuman abilities to manipulate users. OpenAI already faces lawsuits for allegedly encouraging a teenager to commit self-harm.

Meta buying Moltbook is also a risk-increasing move. Agent-to-agent interactions and knock-on effects tend to multiply risk, as seen in events such as the 2010 Wall Street Flash Crash (with algorithms much less capable than today’s).

Increased differentiation may lead to better management of risks from AI

However, in the longer term, as it becomes easier to price in risk from AI purchases, these developments may be positive. In earlier technology generations, consumers were often given a choice between a safer option and a bolder one. This was well illustrated in the battle between IBM and Microsoft, with the saying “nobody ever got fired for buying IBM”. Later, Apple ran “PC vs Mac” ads, showing PCs as the boring, staid choice. This helped Apple among young consumers while cementing PCs as the safe choice for the enterprise market.

A similar kind of differentiation could come about in the AI market. As companies take different stances on safety, customers who care about it get additional opportunities to choose. An increased acknowledgement of safety could push the whole field toward recognizing its benefits.

The first step is for civil society to start holding companies to account for their safety practices and for consumers to vote with their wallets. Ideally, the market should eventually end up with regulator-mandated “nutrition labels” on all AI products, clearly showing their safety level. That transparency will help society manage the many risks AI products can cause.
