by Malcolm Murray

Over the past year, there has been significant movement in AI risk management, with leading providers publishing safety frameworks that function as AI risk management. However, these do not amount to proper risk management when compared to established practice in other high-risk industries.

In other industries, risk management typically has five interconnected components: Risk Identification, Risk Assessment, Risk Evaluation, Risk Treatment and Risk Governance. These go by different names depending on the framework, and are sometimes grouped differently. For example, in the International AI Safety Report, we call them Identification, Analysis/Evaluation, Mitigation and Governance. In a recently published paper that I worked on, Open Problems in Frontier AI Risk Management, they are instead Risk Planning, Risk Identification, Risk Analysis, Risk Evaluation and Risk Mitigation.

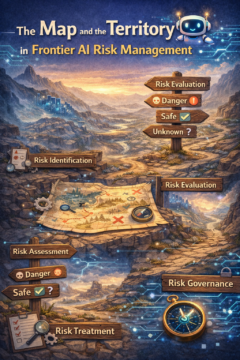

The exact wording and grouping do not matter; what matters is the activity. I have found that a good way to explain the key activities is the concept of the map and the territory. First, we want to understand which territory we are in: this is risk identification. Then we create a map of that territory: this is risk assessment. Third, we determine alternative paths through the territory, which can be compared to risk evaluation. We then need to choose a path and pursue it, which is risk treatment. Finally, the risk governance equivalent is stopping once in a while to get a second pair of eyes on the compass, to confirm that we’re still going in the right direction.

However, in what has become the norm in current AI risk management, only a limited subset of these activities is actually carried out. What typically happens is an assessment of capabilities, and then, if the capabilities reach a specific threshold, a corresponding implementation of predetermined safeguards.
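The threshold-and-safeguard pattern described above can be sketched in a few lines. This is only an illustration: the capability scores, thresholds and safeguard names are hypothetical placeholders, not taken from any provider's framework.

```python
# Illustrative sketch of an "if capability >= threshold, then apply safeguard" rule.
# All thresholds and safeguard names below are hypothetical assumptions.
SAFEGUARDS_BY_THRESHOLD = [
    (0.8, "enhanced security and deployment restrictions"),
    (0.5, "additional misuse monitoring"),
]


def required_safeguards(capability_score: float) -> list[str]:
    """Return the predetermined safeguards triggered by a capability score."""
    return [
        safeguard
        for threshold, safeguard in SAFEGUARDS_BY_THRESHOLD
        if capability_score >= threshold
    ]
```

Note that the only input is a capability score. Threat actors, the sequence of events leading to harm, and any independent review of the decision do not appear anywhere in the rule.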

This means there are several areas where risk management is not happening. First, we don’t know exactly what territory we’re operating in, since there is not really any risk identification taking place. Second, the maps we are making of the territory are likely incomplete, since capabilities do not equal risk, so our maps may lead us astray. And finally, with limited governance, we cannot have confidence that decisions are being made correctly, and we may have veered off course without noticing it.

To look into each of these in turn: for risk identification, the AI safety field identified a few risks early on that seemed salient to policymakers (such as terrorists with biological weapons and cyber offense), and those have become the go-to risks that everyone references. But given that we are creating new types of intelligence, it is unlikely that we identified from day one all the risks that may occur. I think the Moltbook incident the other month is a good example of the unpredictability of AI risk. What is needed instead is what I would call open-ended, blue-sky red-teaming. This is where you bring together cross-functional teams that have access to usage data to think very broadly about what could go wrong, using structured techniques such as scenario analysis or fishbone diagramming.

Second, in risk assessment, we are missing the whole sequence of events that actually leads to harm. What is needed is risk modelling. This is what connects the capability of a model, which in itself of course is neutral, to the harm ultimately inflicted on people, property or the environment. It does so by inserting the threat actors, decomposing the sequence of events that must take place and analyzing the safeguards in the broader environment along the way.
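As a rough illustration of what such a risk model computes, the sketch below chains a threat actor's attempt, the steps that must succeed, and the safeguards that may block each step. It is a minimal sketch under strong assumptions: the steps are treated as independent, and every name and number is a hypothetical placeholder rather than an estimate from any real assessment.

```python
from dataclasses import dataclass
from math import prod


@dataclass(frozen=True)
class Step:
    """One event in the chain from capability to harm (hypothetical structure)."""
    name: str
    p_success: float                 # chance the actor completes the step absent safeguards
    p_safeguard_blocks: float = 0.0  # chance a safeguard in the environment stops it

    def p_pass(self) -> float:
        # The step goes through only if it succeeds and no safeguard blocks it.
        return self.p_success * (1.0 - self.p_safeguard_blocks)


def scenario_probability(p_attempt: float, steps: list[Step]) -> float:
    """Probability that an actor attempts the scenario and every step goes through."""
    return p_attempt * prod(step.p_pass() for step in steps)


def expected_harm(p_attempt: float, steps: list[Step], severity: float) -> float:
    """Scenario probability weighted by the severity of the resulting harm."""
    return scenario_probability(p_attempt, steps) * severity


# Placeholder inputs, chosen only to show the mechanics.
example_steps = [
    Step("acquire knowledge", p_success=0.5, p_safeguard_blocks=0.2),
    Step("obtain materials", p_success=0.1, p_safeguard_blocks=0.5),
    Step("carry out attack", p_success=0.2),
]
example_risk = expected_harm(p_attempt=0.01, steps=example_steps, severity=1000.0)
```

In this framing, a model's capability shifts only some of the `p_success` values. The rest of the chain, including the safeguards in the wider environment, determines how much that shift changes the overall risk.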

Third, the governance structures are very limited and one-dimensional. They do not include independent reviews or enough checks and balances. Governance is insufficient already in today’s world, and when things start happening at machine speed, it will need to become a lot more scalable and automated, something we do not yet know how to do.

So, there is clearly a lot of room for improvement in AI risk management. AI risks are no longer theoretical: last year we saw Anthropic interrupt a nation-state actor using AI to autonomously conduct cyberattacks, and we saw terrorists use AI to plan attacks in Las Vegas and Palm Springs. The time to implement real risk management is now.
