Mordechai Rorvig in Quanta:

Our species owes a lot to opposable thumbs. But if evolution had given us extra thumbs, things probably wouldn’t have improved much. One thumb per hand is enough.

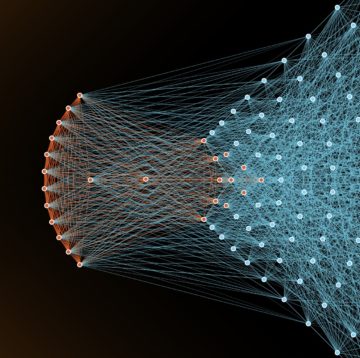

Not so for neural networks, the leading artificial intelligence systems for performing humanlike tasks. As they’ve gotten bigger, they have come to grasp more. This has been a surprise to onlookers. Fundamental mathematical results had suggested that networks should only need to be so big, but modern neural networks are commonly scaled up far beyond that predicted requirement — a situation known as overparameterization.

In a paper presented in December at NeurIPS, a leading conference, Sébastien Bubeck of Microsoft Research and Mark Sellke of Stanford University provided a new explanation for the mystery behind scaling’s success.

More here.